For the past two years, the tech industry has been locked in an "AI arms race." From keynote stages in Silicon Valley to the smallest app updates on the Play Store, the term "Artificial Intelligence" has become a ubiquitous, almost wearying prefix. We have been conditioned to expect AI as a cloud-dependent service—a magic trick performed on a remote server that requires a constant, high-speed internet connection to function.

For many users, this has led to "AI fatigue." Heavily touted features often feel like gimmicks: transient novelties that users try once, find underwhelming, and promptly forget. Central to this skepticism is the concept of on-device AI. Critics have long argued that smartphones lack the raw computational headroom to compete with the massive data centers powering models like GPT-4 or Gemini Advanced. However, a quiet revolution is taking place, and it comes in the form of a lesser-known application: Google’s AI Edge Gallery.

Main Facts: The Shift to Localized Intelligence

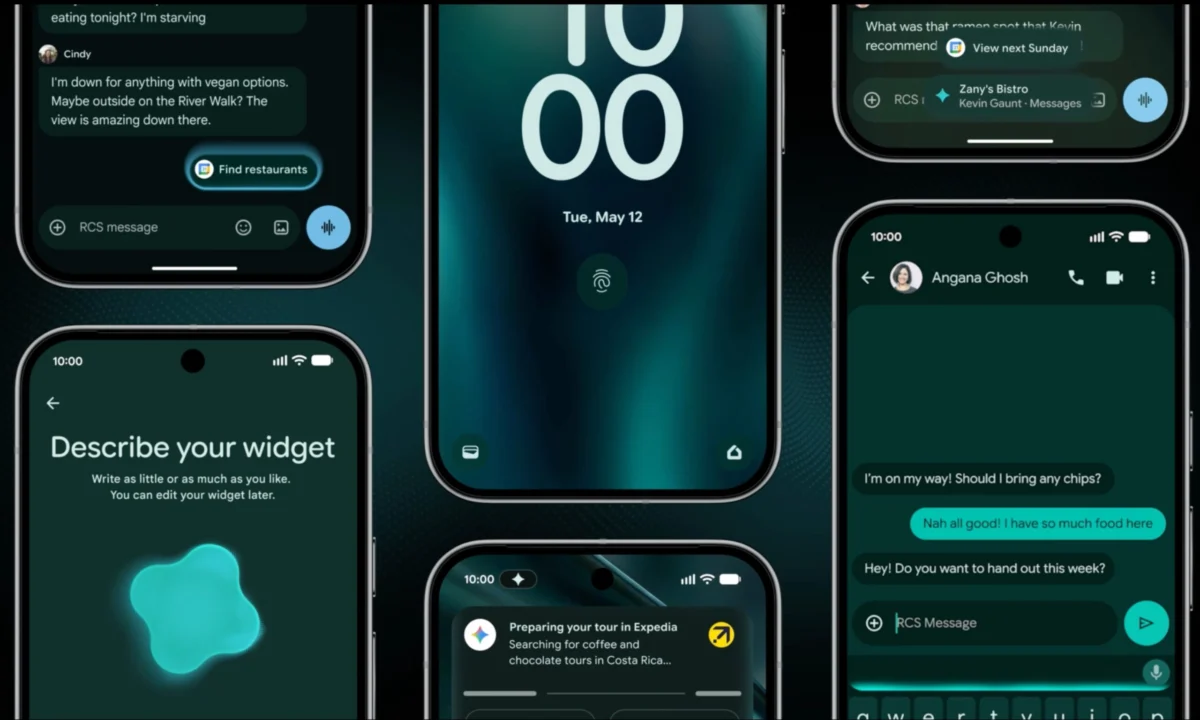

The Google AI Edge Gallery is not merely another chatbot app; it is a sandbox for the future of mobile computing. Launched initially as an experimental project, the app recently garnered significant attention following its integration with Gemma 4, Google’s latest and most capable open-source AI model.

The core premise of the app is simple yet revolutionary: it allows users to download sophisticated AI models directly onto their hardware. Once downloaded, these models operate entirely offline, independent of any cloud servers. Whether you are navigating a subway tunnel, trekking through remote terrain, or cruising at 32,000 feet, the AI remains fully functional. This capability transforms the smartphone from a portal to the cloud into a standalone, intelligent engine capable of complex reasoning, image analysis, and real-time translation without a single packet of data leaving the device.

Chronology: From Experimental Lab to Practical Tool

The journey of the AI Edge Gallery reflects the broader arc of Google’s mobile strategy:

- Mid-2025 (Initial Launch): Google releases the AI Edge Gallery as a niche, experimental platform aimed at developers and power users interested in exploring local inference on mobile hardware.

- Late 2025 (Hardware Maturation): As mobile silicon—specifically the Snapdragon 8 Elite Gen 5 and the latest iterations of Google’s own Tensor series—gains massive NPU (Neural Processing Unit) upgrades, the feasibility of running larger models on-device increases.

- Early 2026 (The Gemma 4 Update): The integration of Gemma 4 marks a turning point. The model’s efficiency-to-performance ratio allows for high-quality responses within mobile hardware constraints, shifting the app from a "tech demo" to a functional tool.

- Mid-2026 (Hands-on Adoption): Real-world testing across diverse hardware—including the Pixel 10 Pro, iPhone Air, and Oppo Find X9 Ultra—reveals that while the software is powerful, the hardware utilization remains inconsistent across the Android ecosystem.

Supporting Data: Performance and Practical Application

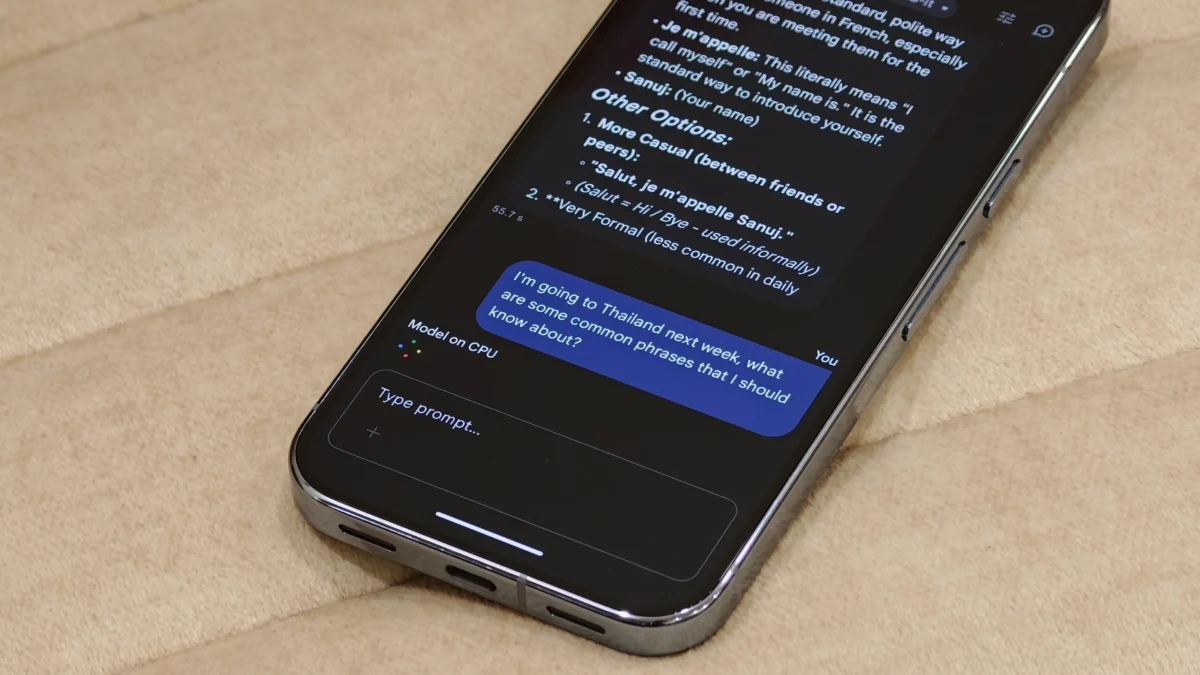

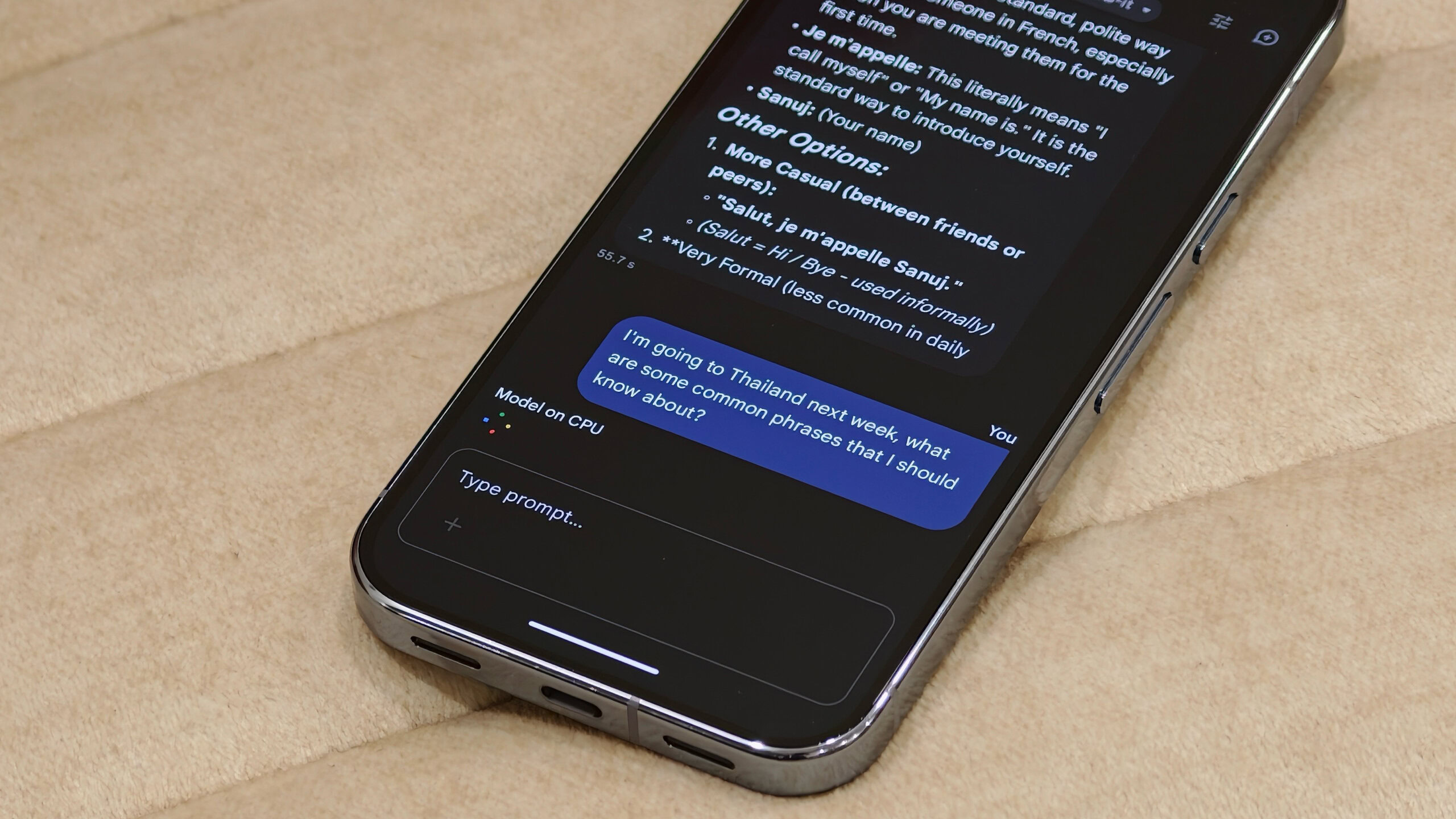

The utility of the AI Edge Gallery is best observed in scenarios where connectivity is non-existent. During a recent international flight to Thailand, the application served as a multi-modal assistant. By utilizing the offline chatbot feature, the author was able to solicit travel advice, translate local phrases, and even obtain summaries of in-flight movie options based on cached metadata.

Comparative Performance Metrics

The performance gap between devices reveals the disparity in how current mobile hardware handles on-device AI:

| Device | Hardware Utilization | Response Time (Audio Task) |
|---|---|---|
| iPhone Air | GPU-accelerated | < 1 second |
| Oppo Find X9 Ultra | Snapdragon 8 Elite (GPU) | ~1.5 seconds |
| Pixel 10 Pro | CPU-fallback (Standard) | ~10 seconds |

The data highlights a critical bottleneck: the "AI Core" bridge. While the iPhone and certain high-end Snapdragon devices are already optimized to leverage local GPU power for these models, Google’s own flagship, the Pixel 10 Pro, currently relies on CPU processing unless the user is enrolled in specific beta programs. The result is a roughly tenfold gap in response time between the Pixel 10 Pro and the iPhone Air, illustrating that while the software is ready, the platform-level integration remains a work in progress.
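To put numbers on that gap, here is a minimal sketch that computes the slowdown ratios from the table above. One assumption is baked in: the iPhone Air figure is reported only as "< 1 second," so the code uses 1.0 second as an upper bound, which makes the iPhone ratio a lower bound on the real difference.

```python
# Response times in seconds for the audio task, taken from the table above.
# ASSUMPTION: the iPhone Air is reported as "< 1 second"; 1.0 is used as an
# upper bound, so any ratio against it is a lower bound on the true gap.
latency_s = {
    "iPhone Air": 1.0,
    "Oppo Find X9 Ultra": 1.5,
    "Pixel 10 Pro": 10.0,
}


def slowdown(device: str, baseline: str) -> float:
    """Return how many times slower `device` is than `baseline`."""
    return latency_s[device] / latency_s[baseline]


for baseline in ("iPhone Air", "Oppo Find X9 Ultra"):
    ratio = slowdown("Pixel 10 Pro", baseline)
    print(f"Pixel 10 Pro vs {baseline}: {ratio:.1f}x slower")
```

Against the Oppo Find X9 Ultra the gap is closer to sevenfold, so the "tenfold" figure applies specifically to the comparison with the iPhone Air.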

Official Responses and Strategic Implications

Google has remained characteristically tight-lipped regarding specific performance optimizations for the AI Edge Gallery, though spokespeople have noted that "optimizing for heterogeneous compute environments is a primary focus for the 2027 hardware roadmap."

The implications for the industry are profound. By shifting the burden of computation from data centers to the device, Google is addressing three major concerns:

- Privacy: Data that never leaves the phone cannot be leaked or used for server-side training in ways the user hasn’t consented to.

- Latency: Removing the "round-trip" time to a cloud server allows for near-instantaneous processing of voice, text, and image data.

- Cost and Sustainability: Cloud-based AI is expensive and energy-intensive to operate. Running models locally leverages the hardware users have already paid for and shifts work away from data centers, which can reduce both the cost and the environmental footprint of AI usage.

The Future of On-Device AI: Challenges and Opportunities

Despite the excitement surrounding the AI Edge Gallery, the transition to local-first AI is not without its hurdles.

The Persistence Problem

One of the most glaring issues for the average user is the lack of "state." Currently, the AI Edge Gallery does not save conversation threads. Unlike cloud-based platforms such as ChatGPT or Gemini, where history is synced across devices, the Edge Gallery treats every interaction as a fresh start. This is largely due to hardware constraints on context memory (RAM), but it severely limits the app’s utility as a long-term personal assistant.

The Hardware Utilization Gap

The most pressing issue is the underutilization of the NPU on Android devices. As noted, the Pixel 10 Pro, a device designed by the same company that builds the model, should theoretically be the benchmark for this technology. The fact that it falls back to CPU processing, resulting in a wait of roughly 10 seconds for simple tasks, is a failure of integration. This creates a "feature gap" where the user experience on iOS is currently more fluid than on Google’s native hardware.
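The fallback behavior described above can be pictured as a simple preference list. The sketch below is purely illustrative: `pick_backend` and the `PREFERRED` order are hypothetical names, and nothing here reflects how the AI Edge Gallery or AI Core actually selects hardware.

```python
from typing import Sequence

# HYPOTHETICAL preference order: fastest accelerator first, CPU as last resort.
PREFERRED = ("npu", "gpu", "cpu")


def pick_backend(available: Sequence[str],
                 preferred: Sequence[str] = PREFERRED) -> str:
    """Return the first preferred backend that the device exposes."""
    for backend in preferred:
        if backend in available:
            return backend
    raise RuntimeError("no usable compute backend")


# A device that exposes its GPU to the app gets accelerated inference.
print(pick_backend(["cpu", "gpu"]))
# A device that exposes only the CPU lands on the slow path.
print(pick_backend(["cpu"]))
```

In this picture, the Pixel 10 Pro's problem is not a missing accelerator but one that is not exposed to the app, so the selection falls through to the last entry.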

Implications for the Consumer Market

Looking ahead, the success of the AI Edge Gallery will determine how OEMs market their future devices. If Google can standardize the "AI Core" to ensure that NPUs are consistently utilized across all Android devices, we will likely see a shift in smartphone marketing from "Megapixels" and "Refresh Rates" to "Tokens Per Second" (TPS) and "Local Model Capacity."
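For readers unfamiliar with the metric, tokens per second is simply the number of tokens a model emits divided by the time it takes to emit them. The sketch below shows one way such a figure could be measured; `fake_model` is a hypothetical stand-in for a real on-device model, and the numbers it produces say nothing about any actual phone.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(token_count: int, elapsed_s: float) -> float:
    """Throughput: tokens emitted divided by wall-clock seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return token_count / elapsed_s


def measure(generate: Callable[[], Iterable[str]]) -> float:
    """Run one generation and return its measured tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    return tokens_per_second(count, time.perf_counter() - start)


def fake_model() -> Iterable[str]:
    # HYPOTHETICAL stand-in for a local model: 50 tokens, small delay each.
    for i in range(50):
        time.sleep(0.002)
        yield f"tok{i}"


print(f"{measure(fake_model):.0f} tokens/s")
```

A model that emits 120 tokens in 4 seconds, for example, runs at 30 tokens per second.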

Conclusion

The Google AI Edge Gallery is a glimpse into a future where the smartphone is a truly independent intelligence. While it currently suffers from early-stage growing pains, specifically regarding memory management and inconsistent hardware utilization, it has successfully debunked the myth that on-device AI is inherently inferior.

Using an AI model at 32,000 feet, without a Wi-Fi connection, is not just a party trick; it is a fundamental shift in how we relate to our technology. When our devices stop being mere windows into a cloud-based web and start being local processing powerhouses, the "gimmick" of AI finally matures into a genuine utility. For now, the app remains a "hidden gem," but as Google refines its Tensor architecture and continues to open up NPU access, it may well become the blueprint for every smartphone on the market.

Summary of Key Takeaways:

- Offline Functionality: The AI Edge Gallery provides fully functional, multimodal AI without internet, proving that on-device models are viable for real-world tasks.

- Gemma 4 Integration: The use of Google’s latest open-source model allows for surprisingly competent performance on mobile hardware.

- The Hardware Hurdle: There is a significant discrepancy in performance between devices, with Android hardware—including the Pixel 10 Pro—lagging behind in NPU utilization compared to competitors.

- The Future Vision: Privacy, latency, and cost-efficiency will drive the next generation of mobile computing, moving away from cloud-dependent architectures toward localized, hardware-accelerated intelligence.