Robots excel at repetition, consistency, and raw strength. In the automotive sector, they weld frames and assemble engines with sub-millimeter precision that no human hand could hope to replicate. Yet, for all their industrial prowess, robots have historically lacked a fundamental human capability: the delicate, intuitive sense of touch. When a robotic arm performs a task, it "sees" its environment through cameras and other sensors, but it often lacks the haptic feedback required to tell a rigid metal surface from fragile biological tissue.

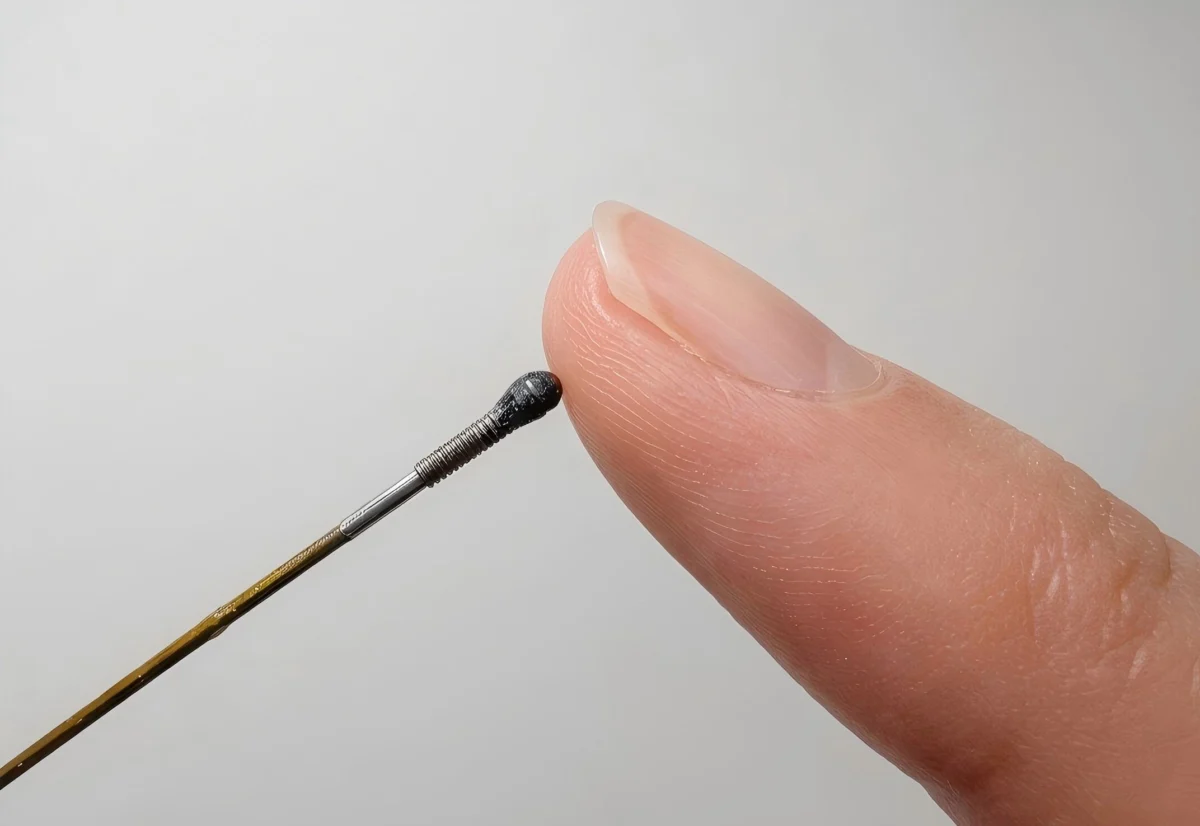

This blind spot has limited the potential of robotics in one of the most demanding fields: medicine. However, researchers at Shanghai Jiao Tong University have recently unveiled a force sensor that could bridge this gap. Roughly the size of a grain of rice, at just 1.7 millimeters wide, the device is designed to give robots a "delicate touch." This innovation could transform minimally invasive surgery, allowing robotic instruments to navigate the human body with far greater tactile awareness.

The Mechanics of "Feeling": How the Sensor Works

The primary hurdle in miniaturizing tactile sensors has traditionally been the complexity of electronic components. To pack a force-sensing array into a space only a few millimeters across, engineers typically face a nightmare of wiring, signal interference, and bulky hardware. The Shanghai Jiao Tong team circumvented this issue by removing electronics from the sensing tip entirely and relying on photonics instead.

A New Approach to Haptics

The sensor functions by harnessing the properties of light rather than electrical current. At the tip of an ultra-thin optical fiber sits a specialized, soft, deformable material. When this material interacts with an external force—whether it is a gentle press, a sliding motion, or a subtle twist—it physically changes shape.

This microscopic deformation alters the way light travels through the sensor. The resulting "distorted" light pattern is transmitted back through the optical fiber to a remote camera, which captures it as an image. At this stage, a machine learning model takes over. By analyzing the shifting light patterns, the model estimates the type and magnitude of the force being applied. Essentially, the system has been trained to "see" touch, allowing it to interpret tactile data without the need for complex, miniaturized circuitry embedded directly at the point of contact.
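The image-to-force step can be illustrated with a toy example. The sketch below is not the team's model, which is not described in detail here. It assumes a made-up forward model (`simulate_pattern`) in place of real camera frames and uses nearest-neighbor regression (`predict_force`) as a simple stand-in for the trained network. All function names and constants are hypothetical.

```python
import math

def simulate_pattern(force, n_pixels=16):
    """Hypothetical forward model: map a force (fx, fy, fz) to pixel intensities.

    A real system would read these values from the remote camera instead.
    """
    fx, fy, fz = force
    return [
        math.sin(0.9 * i + 2.0 * fx) + math.cos(0.5 * i + 2.0 * fy) + fz * (i % 3)
        for i in range(n_pixels)
    ]

def build_calibration(steps=10):
    """Record (pattern, force) pairs on a grid of known forces in [0, 1]."""
    grid = [i / steps for i in range(steps + 1)]
    return [
        (simulate_pattern((fx, fy, fz)), (fx, fy, fz))
        for fx in grid for fy in grid for fz in grid
    ]

def predict_force(pattern, calibration, k=3):
    """Estimate force as a distance-weighted average of the k closest patterns."""
    nearest = sorted(
        (math.dist(pattern, ref_pattern), ref_force)
        for ref_pattern, ref_force in calibration
    )[:k]
    # Closer calibration patterns get larger weights; 1e-9 avoids division by zero.
    weights = [1.0 / (distance + 1e-9) for distance, _ in nearest]
    total = sum(weights)
    return tuple(
        sum(w * ref_force[axis] for w, (_, ref_force) in zip(weights, nearest)) / total
        for axis in range(3)
    )

calibration = build_calibration()
unknown = simulate_pattern((0.31, 0.59, 0.72))  # a force not on the calibration grid
estimate = predict_force(unknown, calibration)
print([round(v, 2) for v in estimate])
```

The design point this mirrors is that all of the "intelligence" sits in the calibration data and the remote model, while the sensing tip only has to produce a repeatable light pattern.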

Chronology: From Concept to Proof-of-Concept

The development of this sensor follows a rigorous path of academic inquiry aimed at solving the "feedback void" in modern robotics.

- Initial Research (Early 2024): Researchers began investigating optical-fiber-based deformation as a viable alternative to piezoresistive or capacitive sensors, which struggle with scalability at sub-millimeter sizes.

- Design and Prototyping (Late 2024): The team focused on the material science aspect, experimenting with various polymers that could maintain structural integrity while being sensitive enough to detect minute force changes.

- The "Gelatin" Trial (2025): The team conducted a pivotal experiment using a soft gelatin block. By hiding a small, rigid sphere beneath the surface of the gelatin—designed to simulate a tumor—the researchers proved that the sensor could identify density and stiffness variations that would be invisible to traditional cameras.

- Publication and Peer Review (May 2026): The technology was formally presented, detailing its efficacy in multi-directional force sensing and its potential for integration into standard surgical tools.

Supporting Data: Why Robots Need a Sense of Touch

In modern surgical suites, "minimally invasive" is the gold standard. Procedures like laparoscopies allow surgeons to operate through tiny incisions, reducing recovery time and infection risk. However, these procedures rely almost entirely on visual input.

A surgeon viewing a 4K monitor of the surgical site is often forced to rely on "visual intuition" to determine tissue health. They can see the surface, but they cannot feel the underlying stiffness that might indicate a hidden pathology, such as a deep-seated tumor or a fibrotic node.

Comparative Advantages of Optical Sensors:

- Miniaturization: At 1.7 mm wide, the device is small enough for catheters and micro-grippers.

- EMI Resistance: Because the sensing tip relies on light rather than electricity, it is immune to electromagnetic interference, a major issue in operating rooms filled with electronic monitoring systems.

- Simplicity: By offloading the "thinking" (data processing) to a remote AI model, the physical hardware at the surgical site is kept simple, durable, and inexpensive to produce.

The Road Ahead: Overcoming Engineering Hurdles

While the laboratory results are promising, the researchers are candid about the distance between a successful lab prototype and a tool that can be safely used on a human patient.

Scaling and Manufacturing

The greatest challenge moving forward is consistency. Manufacturing thousands of these sensors, each with the exact same optical properties and material elasticity, is a daunting task. In a laboratory setting, one-off builds are acceptable, but in the medical industry, mass production must adhere to extreme quality control standards. Any variance in the sensor’s sensitivity could result in inaccurate feedback, which, in a surgical context, is a high-stakes risk.

Clinical Validation

Before the device enters an operating room, it must undergo rigorous long-term stress testing. Surgical tools are subject to sterilization processes, exposure to bodily fluids, and repetitive mechanical strain. The sensor must prove that it can maintain its calibration throughout these demanding cycles.

Implications for the Future of Medicine

If successfully scaled, the implications of this technology are profound. This "synthetic sense of touch" could reshape the following areas:

1. Safer Robotic Surgeries

By providing surgeons with haptic feedback, the sensor could prevent accidental tissue trauma. If a robotic grasper detects that it is exerting too much pressure, the system could automatically adjust its grip or alert the surgeon, effectively adding a "safety buffer" to robotic interventions.
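A minimal sketch of such a safety buffer, assuming a single measured grip force in newtons per control-loop step. The function name `limit_grip`, the 1.0 N limit, and the 0.8 backoff factor are illustrative placeholders, not values from the research or from any clinical standard.

```python
def limit_grip(measured_force_n, commanded_force_n, max_safe_force_n=1.0, backoff=0.8):
    """Return (next_command_n, alert) for one control-loop step.

    max_safe_force_n and backoff are placeholder values, not clinical limits.
    """
    if measured_force_n <= max_safe_force_n:
        return commanded_force_n, False  # within limits: pass the command through
    # Over the limit: cap the command, ease off, and raise an alert for the surgeon.
    return min(commanded_force_n, max_safe_force_n) * backoff, True

print(limit_grip(0.4, 0.5))  # gentle grasp, command unchanged
print(limit_grip(1.3, 1.5))  # excessive force, command reduced and alert raised
```

A real controller would also need filtering and rate limits so that sensor noise does not cause the grip to oscillate.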

2. Enhanced Diagnostic Capability

The ability to detect subsurface density variations means that surgeons could perform "tactile mapping" of organs in real time. This could allow for more precise biopsies, as the robot could locate a tumor based on its stiffness, even if it is not visible to the naked eye.
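One way to picture tactile mapping, in the spirit of the gelatin experiment, is to probe a grid of points and compare local stiffness. The sketch below assumes a simple linear spring model (stiffness = force / indentation depth) and flags points that are much stiffer than the median. The probe readings, the function names, and the 2x threshold are all made up for illustration.

```python
from statistics import median

def stiffness_map(probes):
    """Map each (x, y) probe site to an estimated stiffness in N/mm.

    probes: iterable of (x_mm, y_mm, force_n, indentation_mm).
    Assumes a linear spring model; real tissue is not this simple.
    """
    return {(x, y): force / depth for x, y, force, depth in probes if depth > 0}

def flag_stiff_regions(stiffness, ratio=2.0):
    """Return probe sites whose stiffness exceeds ratio times the median."""
    baseline = median(stiffness.values())
    return sorted(site for site, k in stiffness.items() if k > ratio * baseline)

# Hypothetical readings: every site is indented 0.5 mm; one needs far more force.
probes = [
    (0, 0, 0.10, 0.5),
    (0, 1, 0.11, 0.5),
    (1, 0, 0.09, 0.5),
    (1, 1, 0.30, 0.5),  # simulated hidden inclusion
    (2, 0, 0.10, 0.5),
]
stiffness = stiffness_map(probes)
print(flag_stiff_regions(stiffness))
```

Using the median as the baseline keeps a single stiff inclusion from skewing the reference value.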

3. Remote Tele-surgery

As the industry moves toward long-distance robotic surgery, the lack of touch is a major limitation. Haptic feedback is the missing piece of the puzzle that would allow a surgeon in one city to "feel" the resistance of tissue in another, bringing remote procedures closer to the safety and intuitiveness of those performed in person.

Conclusion

The work coming out of Shanghai Jiao Tong University represents a shift in how we conceive of robotic interaction. By moving away from the "more electronics is better" philosophy and embracing the elegant simplicity of photonics, the team has opened a door to a new era of medical technology.

A grain-of-rice-sized sensor may seem like a minor advancement in the context of global robotics, but for a surgeon navigating the delicate, claustrophobic environment of the human body, it could be a game-changer. As the researchers continue to refine the manufacturing process and move toward clinical testing, we are inching closer to a future where robots don’t just assist in surgery but act as an extension of the surgeon’s own hand, endowed with the sensitivity and nuance that once belonged solely to the human touch.

The path to clinical adoption remains long, involving years of regulatory hurdles and technical refinement. However, the proof-of-concept is clear: the future of precision medicine is not just in seeing better, but in finally being able to feel.