In the annals of hardware history, few series evoke as much nostalgia—and controversy—as MSI’s "Lightning" branding. Designed to represent the absolute pinnacle of engineering, these cards were intended to be the ultimate tools for enthusiasts and professional overclockers. However, the release of the MSI Radeon HD 6970 Lightning and the GeForce GTX 580 Lightning in 2011 serves as a cautionary tale of how high-end marketing can sometimes clash with real-world application. While these cards were visually striking and packed with enthusiast-grade features, they ultimately struggled to justify their premium price tags, falling short in noise management, functional BIOS design, and tangible performance gains.

The Genesis of the Lightning Series

In 2011, the graphics card market was defined by fierce competition between AMD’s Radeon HD 6970 and Nvidia’s GeForce GTX 580. MSI, looking to capture the "prosumer" and extreme-overclocker segment, positioned the Lightning series as the definitive versions of these GPUs. The strategy was clear: take the fastest chips available, attach a top-tier cooling solution, beef up the power delivery, and add a layer of software and hardware control that would appeal to those who live in the BIOS.

However, as early testing revealed, the gap between the "reference" cards provided by AMD and Nvidia and MSI’s heavily marketed "Lightning" editions was surprisingly narrow. What was meant to be a revolution in enthusiast hardware felt, for many, like a glorified factory overclock with a loud fan profile.

Technical Architecture: Twin Frozr III and Power Delivery

The centerpiece of both the HD 6970 and GTX 580 Lightning was the Twin Frozr III cooler. At the time, this was one of the most advanced cooling solutions on the market, featuring two 90mm PWM-controlled fans.

Cooling Discrepancies

Under the hood, the two cards did not share the same cooler design. The Radeon HD 6970 Lightning used a copper core to draw heat away from the AMD chip, whereas the GeForce GTX 580 Lightning used an aluminum plate to interface with the GPU die. Both solutions funneled heat through five high-performance heatpipes into an aluminum fin array. While these components were of high quality, the cooling performance was marred by an aggressive fan profile that produced excessive noise, particularly on the Nvidia variant.

Power Delivery

MSI spared no expense in terms of electrical engineering. Both cards featured a robust 12-phase power supply for the GPU, paired with a 3-phase supply for the video memory. To feed these power-hungry circuits, MSI opted for dual 8-pin PCIe connectors. This setup was designed specifically to prevent voltage droop under extreme load—a common requirement for sub-zero overclocking sessions using liquid nitrogen or dry ice.
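
As a rough illustration of what dual 8-pin connectors buy, the sketch below adds up the nominal limits from the PCI Express specification: 75 W from the slot, 75 W per 6-pin connector, and 150 W per 8-pin connector. These are specification ceilings, not measured draw for either Lightning card, and the function and constant names are made up for this example.

```python
# Nominal PCI Express power limits in watts (spec ceilings, not measurements).
SLOT_LIMIT_W = 75        # x16 slot
SIX_PIN_LIMIT_W = 75     # 6-pin auxiliary connector
EIGHT_PIN_LIMIT_W = 150  # 8-pin auxiliary connector


def board_power_budget(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Return the in-spec power budget for a card with the given connectors."""
    if six_pin < 0 or eight_pin < 0:
        raise ValueError("connector counts must be non-negative")
    return SLOT_LIMIT_W + six_pin * SIX_PIN_LIMIT_W + eight_pin * EIGHT_PIN_LIMIT_W


# Dual 8-pin, as described for the Lightning cards.
lightning_budget = board_power_budget(eight_pin=2)
# A 6-pin + 8-pin layout, included only for comparison.
six_plus_eight_budget = board_power_budget(six_pin=1, eight_pin=1)

print(f"Dual 8-pin:    {lightning_budget} W")       # 375 W
print(f"6-pin + 8-pin: {six_plus_eight_budget} W")  # 300 W
```

The extra 75 W of in-spec headroom only matters when the card is pushed far beyond stock settings, which is consistent with the extreme-overclocking focus described above.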

The BIOS Dilemma: Innovation or Confusion?

Perhaps the most peculiar aspect of the Lightning series was the multi-BIOS implementation. MSI marketed these cards as having the flexibility of switching between different profiles via a physical switch on the card.

The Radeon HD 6970 Implementation

The HD 6970 Lightning featured a three-position switch. In theory, this allowed for three distinct operating modes. In practice, however, MSI had included only two physical BIOS chips. The third switch position was non-functional: selecting it resulted in a black screen and a panicked user. Furthermore, the two working BIOS versions behaved almost identically, differing only in the maximum clock speeds they allowed for manual overclocking.

The GeForce GTX 580 Approach

The Nvidia-based Lightning fared slightly better, utilizing a genuine dual-BIOS setup. The secondary BIOS was designed to be a "silent" mode, which lowered clock speeds and utilized a less aggressive fan curve. While this was a step in the right direction, it failed to mitigate the card’s fundamental noise issues under heavy load.

Overclocking and Undocumented Features

For the intended audience of extreme overclockers, the Lightning boards were a playground. The PCB was littered with voltage measurement points, allowing users to attach a multimeter and monitor GPU, memory, and I/O voltages in real time.

However, the cards also featured several mysterious, undocumented switches on the PCB. On the GeForce variant, these switches were rumored to:

- Disable Nvidia’s integrated power-protection features (which usually throttle performance when current draw is too high).

- Bypass the "cold bug," a phenomenon where GPUs fail to boot or operate at extreme sub-zero temperatures.

- Enable unknown, "secret" voltage control functions.

While these features were a goldmine for competitive overclockers looking to set world records, they were entirely inaccessible and confusing for the average consumer, adding to the product’s reputation as a "niche" device with poor usability.

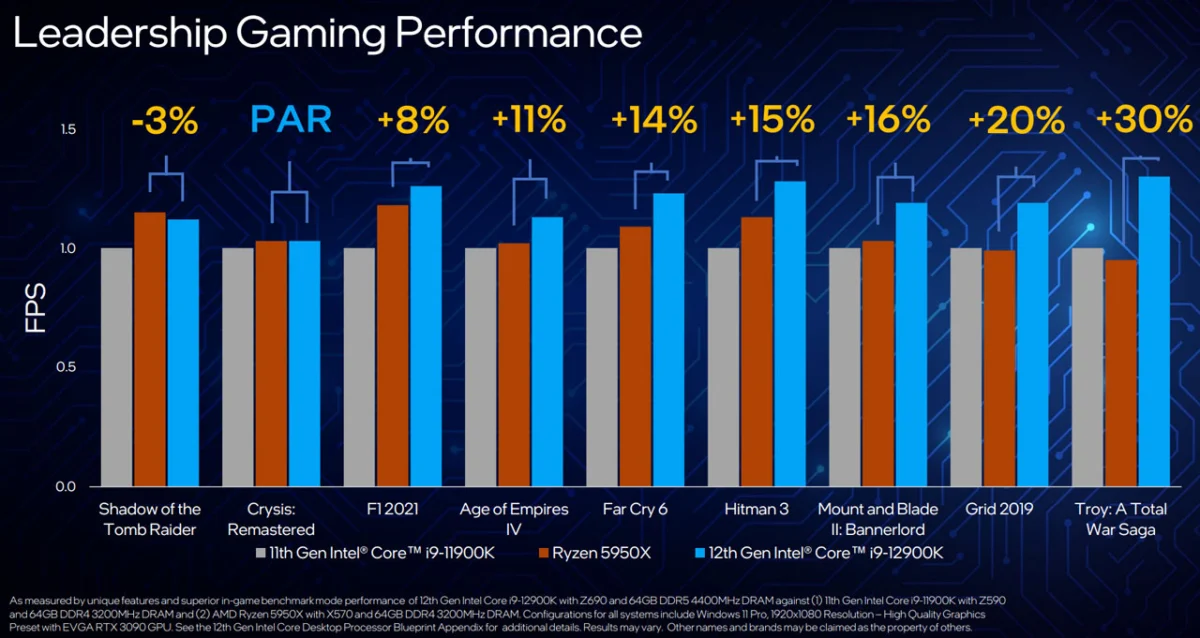

Performance Metrics: The "Measurable, Not Felt" Gap

When it came to raw performance, the Lightning series provided only a minor uplift over the reference designs. In gaming tests at 2,560 x 1,600 pixels, a very demanding resolution for the era, the Lightning cards were on average only 4% to 6% faster than the standard models.

The Overclocking Ceiling

Even with aggressive manual voltage adjustments and overclocking, the cards topped out at only about 9% above stock performance. For the price premium MSI charged, this was a difficult pill to swallow. The extra power delivery and robust VRMs were effectively overkill for the vast majority of users, who were not using extreme cooling methods.

The Implications: Lessons Learned

The 2011 Lightning experiment highlights a recurring theme in the history of PC components: the difference between "maximum potential" and "practical value."

The Cost of Excellence

The Radeon HD 6970 Lightning launched at approximately 320 Euro, compared to 250 Euro for a standard reference card, a premium of 70 Euro or 28%. The GTX 580 Lightning was priced at 430 Euro against a 380 Euro reference price, a smaller premium of 50 Euro or about 13%. In retrospect, the extra cost bought very little in terms of real-world gaming performance.
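
To put the prices next to the measured uplift, here is a minimal sketch of the arithmetic. The prices and the 4% to 6% range are the figures quoted in this article; applying the same range to both cards is an assumption, since the article gives it for the series as a whole, and the names used are illustrative.

```python
# Launch prices in euros, as quoted in this article.
PRICES_EUR = {
    "Radeon HD 6970": {"reference": 250, "lightning": 320},
    "GeForce GTX 580": {"reference": 380, "lightning": 430},
}

# Average speedup over reference at 2,560 x 1,600 (article: 4% to 6%).
# Assumption: the same range is applied to both cards.
UPLIFT_RANGE_PCT = (4.0, 6.0)


def price_premium_pct(reference: float, lightning: float) -> float:
    """Percentage by which the Lightning price exceeds the reference price."""
    return (lightning - reference) / reference * 100.0


for card, p in PRICES_EUR.items():
    extra = p["lightning"] - p["reference"]
    premium = price_premium_pct(p["reference"], p["lightning"])
    # Euros paid per percentage point of extra performance,
    # at the favorable (6%) and unfavorable (4%) ends of the range.
    best = extra / UPLIFT_RANGE_PCT[1]
    worst = extra / UPLIFT_RANGE_PCT[0]
    print(f"{card}: +{extra} EUR ({premium:.1f}% premium), "
          f"{best:.2f} to {worst:.2f} EUR per percentage point of uplift")
```

Under these assumptions the Radeon buyer paid a 28% premium and the GeForce buyer roughly 13%, in both cases for a single-digit percentage gain.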

Noise Management

The most significant failure was the acoustic profile. Even with the Twin Frozr III cooler, the fans were loud under load. Many users found themselves having to manually tune the fan curves just to achieve a bearable acoustic environment. That cards carrying a "premium" label needed such manual intervention to perform as expected alienated many enthusiasts who wanted a "plug-and-play" flagship experience.
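
For readers unfamiliar with the idea, a custom fan curve is just a set of temperature and fan-speed points with interpolation in between. The sketch below shows that concept only: the curve points are hypothetical values chosen for illustration, not MSI's stock profile or a recommended setting, and the function name is invented.

```python
from bisect import bisect_right

# Hypothetical (temperature in °C, fan duty in %) points; not MSI's actual profile.
QUIET_CURVE = [(40, 30), (60, 40), (75, 55), (90, 100)]


def fan_duty(temp_c: float, curve=QUIET_CURVE) -> float:
    """Linearly interpolate fan duty (%) for a GPU temperature along the curve."""
    temps = [t for t, _ in curve]
    if temp_c <= temps[0]:
        return float(curve[0][1])   # clamp below the first point
    if temp_c >= temps[-1]:
        return float(curve[-1][1])  # clamp above the last point
    i = bisect_right(temps, temp_c)  # index of the first point above temp_c
    (t0, d0), (t1, d1) = curve[i - 1], curve[i]
    return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)


for t in (35, 50, 70, 95):
    print(f"{t} °C -> {fan_duty(t):.0f}% duty")
```

A gentler slope at mid-range temperatures trades a few degrees for less noise, which is the kind of adjustment owners ended up making by hand.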

Conclusion: A Legacy of Niche Appeal

Ultimately, the MSI Radeon HD 6970 and GeForce GTX 580 Lightning were not cards for the average gamer. They were, in effect, extreme-overclocking platforms packaged and sold to the general public. While they served as an excellent platform for those looking to push silicon to its absolute limit, they failed to offer a compelling value proposition to the broader market.

The "Lightning" brand would go on to evolve, learning from these early mistakes by focusing more on thermals and acoustic efficiency in later generations. However, the 2011 models stand as a monument to a time when manufacturers were still experimenting with how to market "enthusiast" gear to a growing gaming audience. They remain a fascinating piece of history, serving as a reminder that more phases, more switches, and more marketing hype cannot replace the need for a balanced, well-optimized product.

For those who still hold onto these cards in their retro builds, they serve as a unique artifact of a time when the GPU war was fought not just in frames per second, but in the number of capacitors on a PCB and the audacity of the marketing claims.