The landscape of mobile computing is undergoing its most radical transformation since the introduction of the touchscreen. With the upcoming release of Android 17, Google is shifting the paradigm from an "app-centric" model—where users manually navigate through isolated software silos—to an "agent-centric" model. By deeply integrating Gemini Intelligence into the core of the operating system, Android is evolving into a proactive digital agent capable of autonomous decision-making and cross-app orchestration.

Main Facts: A New Era of Automation

Google’s strategy, summarized by the mantra "AI is the new UI," aims to remove the friction of manual interaction. Android 17, powered by Gemini Intelligence, will prioritize the AI’s ability to execute complex tasks on behalf of the user.

The deployment of these features begins this summer, with Google Pixel and Samsung Galaxy devices serving as the initial launch platforms. Google’s vision extends beyond phones, however: it intends to bring these capabilities to smartwatches, automotive infotainment systems (Android Auto), and the emerging category of "Googlebooks," notebooks built on an Android-based tech stack.

Key highlights of the update include:

- Cross-App Orchestration: Gemini can now string together multiple actions across different applications. For example, it can extract a grocery list from a note-taking app, populate a cart in a delivery service, and finalize the order with minimal user intervention.

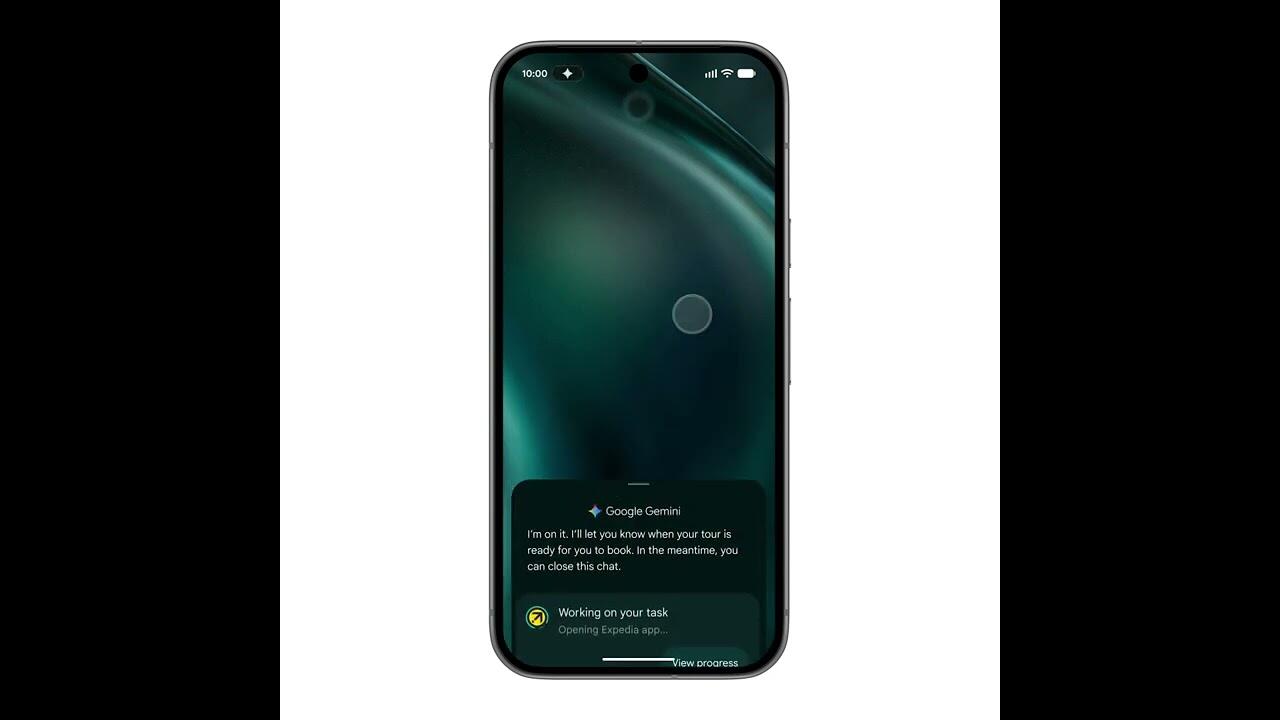

- Contextual Awareness: By opting into screen analysis, Gemini can "see" what is on the user’s display, allowing it to assist with tasks based on real-time visual context, such as booking tours based on a photo of a brochure.

- Intelligent Autofill: "Autofill with Google" now leverages Gemini to navigate complex, multi-field forms, pulling data directly from the device’s secure local storage.

- Generative Widgets: Using simple natural language prompts, users can create custom widgets tailored to their specific needs, such as a specialized weather monitor or a dynamic recipe tracker.

Chronology: The Path to Agentic Intelligence

The journey toward this release has been a calculated progression by Google:

- February 2024: Google introduced multi-step request handling in the US and South Korea, marking the first real-world test of Gemini’s ability to execute background tasks.

- Pre-Summer 2025: Beta testing of "Gemini Intelligence" refined the agent’s ability to interact with third-party APIs, ensuring that actions like booking sports classes or organizing emails were not only possible but also secure.

- Summer 2025 Launch: The official rollout of Android 17 begins in phases, starting with flagship Google and Samsung hardware and followed by a broader ecosystem update.

- Late 2025 and Beyond: Integration of the "Quick Share" protocol into devices from third-party manufacturers (Honor, OnePlus, Oppo, Xiaomi), along with expanded support for eSIM transfers between iOS and Android.

What is an AI Agent?

To understand the significance of Android 17, one must distinguish between a traditional chatbot and a modern "AI Agent." While a chatbot is reactive—waiting for a prompt and responding with text—an agent is teleological, meaning it is goal-oriented.

An agent perceives its environment, evaluates the best path to achieve a user’s objective, and executes the necessary actions across multiple interfaces. If a user asks an agent to "plan a trip for six people," the agent doesn’t just suggest a destination; it checks calendars, accesses hotel booking apps, coordinates with group members, and prepares the itinerary. Crucially, the agent remains a servant to the user: it performs the "logistics," but reserves the final, critical confirmation for the human, ensuring that control remains firmly with the user.
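The plan-execute-confirm pattern described above can be illustrated with a minimal sketch. Everything here is hypothetical: the `Step` and `Agent` classes, the app names, and the canned results are stand-ins invented for illustration and do not reflect how Gemini or Android is actually implemented. The point is only the control flow, in which routine steps run automatically while the critical step waits for the user.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One action the agent intends to take in a (hypothetical) app."""
    app: str
    description: str
    run: Callable[[], str]
    needs_confirmation: bool = False  # True for the final, critical step


@dataclass
class Agent:
    """Toy goal-oriented agent: runs logistics, pauses before critical steps."""
    confirm: Callable[[Step], bool]  # asks the human; True means "proceed"
    log: List[str] = field(default_factory=list)

    def execute(self, plan: List[Step]) -> bool:
        for step in plan:
            if step.needs_confirmation and not self.confirm(step):
                self.log.append(f"declined: {step.description}")
                return False  # nothing critical happens without approval
            self.log.append(step.run())
        return True


def build_trip_plan() -> List[Step]:
    """Hypothetical plan for the 'trip for six people' example."""
    return [
        Step("calendar", "check calendars", lambda: "calendar: found free weekend"),
        Step("hotels", "search hotels", lambda: "hotels: shortlisted 3 options for 6 guests"),
        Step("hotels", "book hotel", lambda: "hotels: booking placed", needs_confirmation=True),
    ]


if __name__ == "__main__":
    agent = Agent(confirm=lambda step: False)  # simulate a user who says "no"
    print(agent.execute(build_trip_plan()))
    for line in agent.log:
        print(line)
```

With a user who declines, the two preparatory steps still run, but the booking step is recorded as declined and never executed.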

Supporting Data: Enhancing the User Experience

Beyond the core AI agent, Android 17 brings quality-of-life improvements to dictation, content creation, and professional video editing.

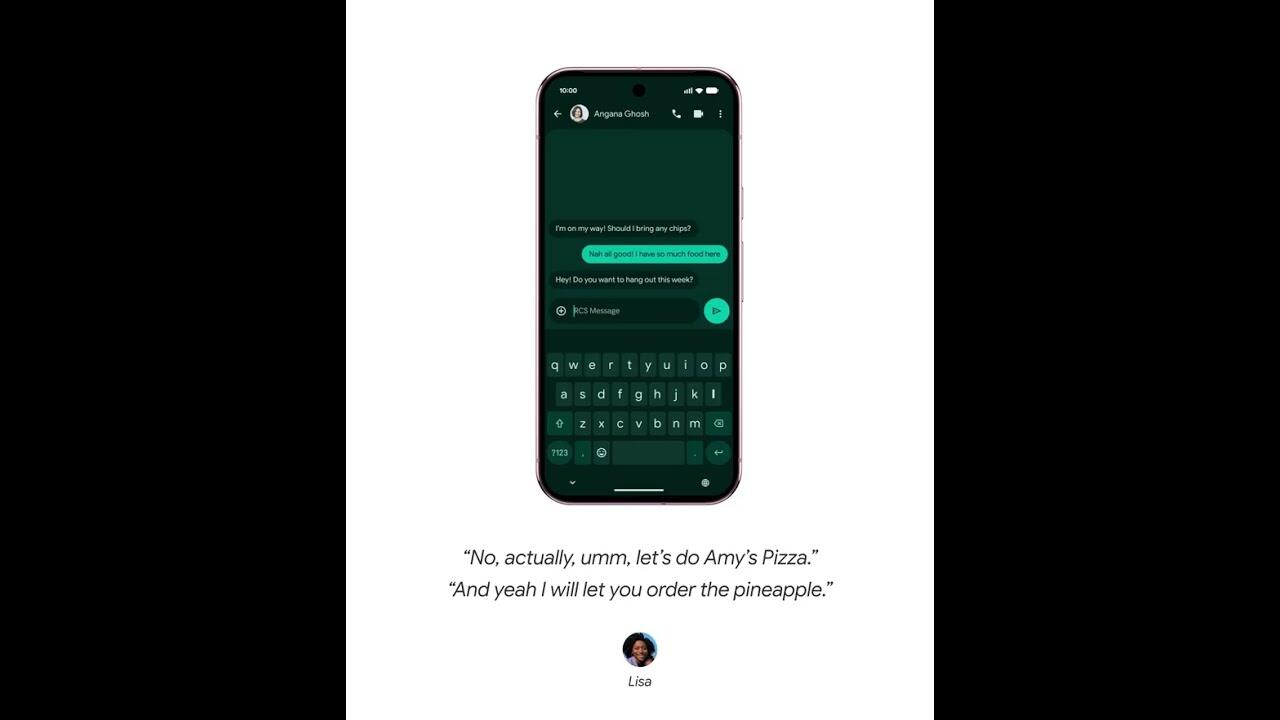

Gboard and "Rambler"

Communication is being streamlined through the "Rambler" upgrade to Gboard. Using natural language processing to filter out disfluencies such as "um," "ah," and repeated filler words, the system allows for natural yet polished dictation. Users no longer need to speak in rigid, pre-planned sentences; the AI refines speech into coherent text in real time.
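To make the idea of disfluency filtering concrete, here is a deliberately simple sketch. It is not how "Rambler" works: the article describes natural language processing, whereas this example assumes a fixed, hypothetical filler list and plain regular expressions. It also cannot tell an accidental repetition from an intentional one (for example "that that").

```python
import re

# Hypothetical filler list; a real dictation system would rely on a trained
# language model rather than a fixed vocabulary.
FILLERS = ("um", "uh", "ah", "er", "hmm")

# A filler word, an optional trailing comma or period, and following spaces.
_FILLER_RE = re.compile(
    r"\b(?:" + "|".join(FILLERS) + r")\b[,.]?\s*",
    flags=re.IGNORECASE,
)
# Immediate word repetitions such as "at at" or "I I I".
_REPEAT_RE = re.compile(r"\b(\w+)(?:\s+\1\b)+", flags=re.IGNORECASE)


def clean_dictation(text: str) -> str:
    """Remove filler words and collapse immediately repeated words."""
    without_fillers = _FILLER_RE.sub("", text)
    without_repeats = _REPEAT_RE.sub(r"\1", without_fillers)
    return re.sub(r"\s+", " ", without_repeats).strip()


if __name__ == "__main__":
    print(clean_dictation("Um I I think we should uh meet at at noon"))
    # -> I think we should meet at noon
```

The word-boundary anchors keep the filter from touching words that merely contain a filler, such as "ahead".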

Multimedia and Content Creation

Android 17 introduces "Screen Reactions," a feature allowing users to record themselves as an overlay on top of screen captures without the need for a green screen or complex video editing software. Furthermore, the Instagram experience on Android is seeing a massive overhaul, supporting Ultra-HDR uploads, improved video stabilization, and native tablet optimization, finally closing the feature gap that has long existed between Android and iOS.

Professional Tools

Adobe Premiere is arriving on Android this summer, bringing professional-grade video editing to the platform. The launch is optimized for short-form content, with built-in templates and effects designed for YouTube Shorts, and is supported by the APV (Advanced Professional Video) codec.

Official Responses and Privacy Considerations

Google has been vocal about the privacy implications of these features. Central to the design of Gemini Intelligence is the "Opt-in" requirement. Whether it is reading the screen to assist with a booking or accessing personal data to fill out a form, nothing happens without explicit user permission.

Google’s official statement on the agentic workflow is clear: "Gemini only acts on your command and stops the moment the task is complete. All that’s left for you is the final confirmation." This "Human-in-the-loop" design is a strategic choice to mitigate concerns regarding autonomous AI behavior.

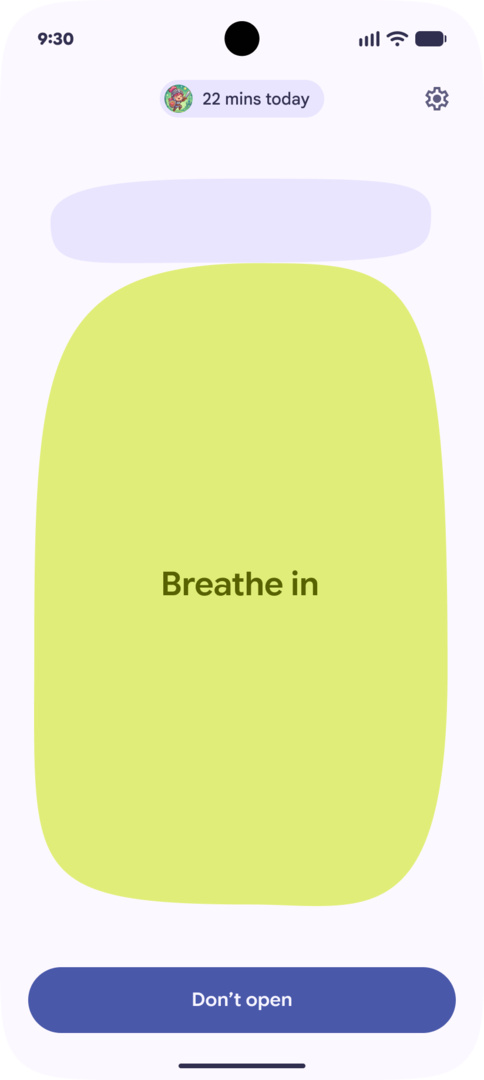

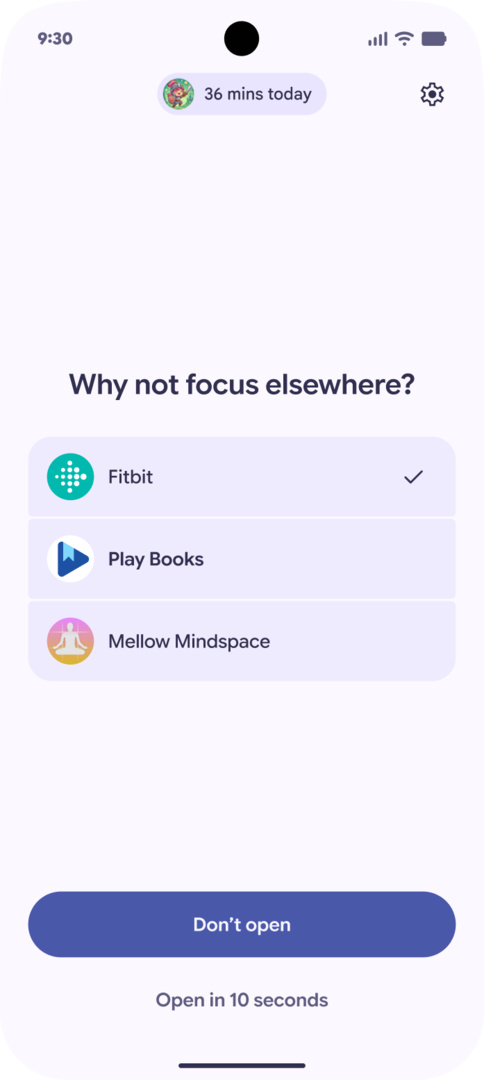

Additionally, the introduction of "Pause Point" demonstrates Google’s commitment to "Digital Wellbeing." By identifying patterns of monotonous or potentially addictive app usage, the system can prompt 10-second breaks or transitions, and users can lock the feature in system settings to make those breaks mandatory.

Implications: A Shift in the Tech Ecosystem

The implications of this update are profound for both developers and consumers.

For Developers: The era of the isolated "App Silo" is ending. If an app does not provide an API that allows an AI agent to perform tasks within it, it will likely become invisible to the user. Developers must now design for "Agent-Readiness," ensuring their services are discoverable and actionable by Gemini.
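What "discoverable and actionable" might mean in practice can be sketched with a small action registry. This is an illustrative assumption, not an Android or Gemini API: the `agent_action` decorator, the `discover` and `invoke` functions, and the `cart.add` action name are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical registry: action name -> (description, handler).
_ACTIONS: Dict[str, Tuple[str, Callable[..., str]]] = {}


def agent_action(name: str, description: str):
    """Decorator that registers a function as an agent-callable action."""
    def register(func: Callable[..., str]) -> Callable[..., str]:
        _ACTIONS[name] = (description, func)
        return func
    return register


@agent_action("cart.add", "Add an item to the shopping cart")
def add_to_cart(item: str, quantity: int = 1) -> str:
    return f"added {quantity} x {item}"


def discover() -> List[str]:
    """What an agent could query to learn what the app can do."""
    return [f"{name}: {desc}" for name, (desc, _) in sorted(_ACTIONS.items())]


def invoke(name: str, **kwargs) -> str:
    """Run a registered action; unknown names are rejected, not guessed."""
    if name not in _ACTIONS:
        raise KeyError(f"unknown action: {name}")
    _, handler = _ACTIONS[name]
    return handler(**kwargs)


if __name__ == "__main__":
    print(discover())
    print(invoke("cart.add", item="milk", quantity=2))
```

An app that exposes nothing through such a registry would simply return an empty list from `discover()`, which is one way to picture an app being "invisible" to an agent.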

For Consumers: The smartphone will become less of a collection of apps and more of a unified interface. The cognitive load required to manage digital life—scheduling, booking, researching, and organizing—will be offloaded to the device. However, this also deepens the user’s reliance on the Google ecosystem, as the efficacy of the agent depends on the depth of the data it has access to.

For the Competition: The integration of a sophisticated agent directly into the core of the operating system poses a significant challenge to Apple. While Apple has its own AI initiatives, Google’s aggressive push into agentic workflows, combined with the cross-platform nature of Android, suggests a future where the OS is defined by its intelligence rather than its visual aesthetics.

Android 17 represents a watershed moment. It is the transition from a device that requires constant instruction to a companion that understands intent. As we move into the summer of 2025, the question is no longer "what apps do I have?" but rather "what can I ask my phone to do?"