In a move intended to deepen user trust, but one that also raises significant questions about digital safety, Meta has announced the rollout of an "Incognito Chat" mode for its Meta AI chatbot within WhatsApp. As AI integration becomes ubiquitous across Meta’s suite of applications, this new feature promises a walled-off experience in which user inputs are shielded from both the company’s internal training pipelines and human oversight.

While proponents argue that this is a necessary evolution for users dealing with sensitive financial, medical, or professional inquiries, critics warn that the feature may create a "black box" environment where misinformation and harmful guidance can flourish unchecked.

The Core Mechanism: What is WhatsApp’s Incognito Mode?

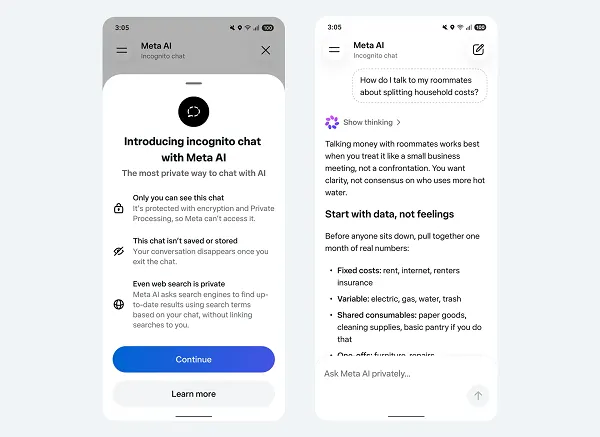

The premise behind Meta’s Incognito Chat is simple: to provide a space where users can query artificial intelligence without the lingering footprint of data collection. According to Meta, this mode uses a secure, private processing environment. When a user engages with the chatbot in this mode, the conversations are not stored on Meta’s servers, nor are they used for the ongoing training of the large language models (LLMs) that power the assistant.

Meta characterizes this as a "private, temporary conversation." By default, messages are ephemeral, disappearing once the session concludes, and the company claims that even its own engineers cannot access the content of these exchanges. This is a significant shift from the standard operating model of most generative AI, where user prompts are typically harvested, anonymized, and fed back into the system to "refine systematic understanding and response."

A Chronology of Meta’s AI Integration

The trajectory of Meta’s AI ambitions has been aggressive, moving from experimental beta testing to full-scale deployment across its ecosystem in a matter of months.

- Early 2023: Meta begins restructuring its internal teams to focus heavily on "Generative AI" initiatives, shifting resources from the Metaverse to compete with OpenAI and Google.

- Mid-2023: Initial testing of Meta AI in select regions begins, primarily focused on Instagram and Facebook Messenger.

- Late 2023: WhatsApp begins integrating AI chatbots into the user interface, allowing users to ask questions directly within their messaging threads.

- Early 2024: Concerns regarding data privacy begin to mount as users express hesitation about sharing sensitive work or health data with a chatbot that is perpetually "learning."

- Current Phase: The announcement of "Incognito Chat" and "Side Chat" features signals Meta’s intent to address the "privacy friction" that has thus far prevented mass adoption for sensitive queries.

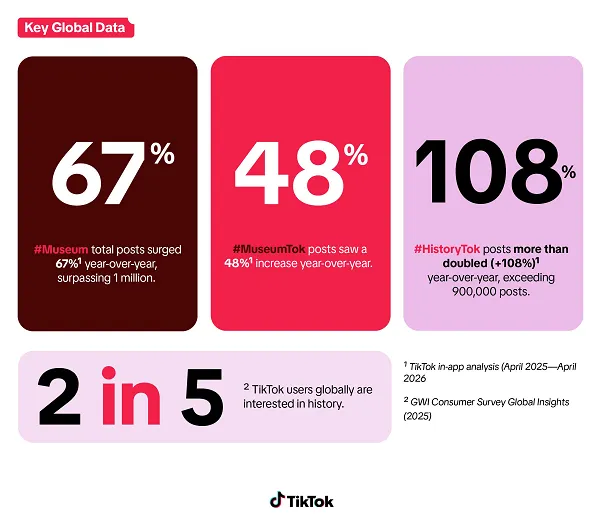

Supporting Data: The Rising Demand for AI Consultation

Data suggests that users are increasingly turning to AI as a first-line search engine, bypassing traditional browser-based search methods. According to internal reports from Meta, the volume of queries regarding personal finance, career planning, and medical symptoms has surged.

The utility of these chatbots is undeniable. AI can synthesize vast amounts of information, draft emails, summarize complex documents, and offer programming assistance in seconds. However, this convenience creates a "reliance trap." As users treat chatbots as personal advisors, the sensitivity of the data being shared rises with it. A study by the tech analytics firm Global Data Insights found that 62% of users are "concerned about the long-term storage of their AI prompts," suggesting that privacy is a major barrier to deeper AI integration in professional and personal workflows.

Official Responses and Meta’s Stance

Meta’s official documentation frames the move as a proactive response to user feedback. In a recent blog post, the company noted:

"Chatting with AI has quickly become a critical part of how people get information and ask important questions. Many of these questions can be deeply sensitive… [We are providing] a space to think and explore ideas without anyone watching."

The company emphasizes that the "Incognito" architecture is specifically designed to satisfy users who want the power of LLMs without the corporate oversight. By decoupling the chatbot from the training loop, Meta believes it can attract a demographic of users who have otherwise avoided AI due to privacy anxieties.

Implications: The Accuracy and Safety Dilemma

While the privacy benefits are clear, the implications of a "blind" AI system—one that operates without internal monitoring or oversight—are profound.

1. The Hallucination Risk

Generative AI models are fundamentally probabilistic, not deterministic. They predict the next word in a sequence based on training data, which leads to the phenomenon known as "hallucination," where the model asserts false information with absolute confidence. If Meta has no way to review these interactions (because they are encrypted and deleted), it becomes impossible for the company to track where its model is failing. This lack of data prevents developers from identifying systematic biases or factual errors that could misinform users on critical health or legal matters.
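To make the probabilistic point concrete, the following is a toy sketch in Python rather than a description of how Meta AI works. The candidate completions and their scores are invented for illustration, and a real model works over a far larger vocabulary. The sketch shows only that the next word is drawn from a probability distribution, so an unlikely and wrong completion is still produced some of the time.

```python
import math
import random


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_word(candidates, scores, rng):
    """Pick one candidate at random, weighted by its probability."""
    probs = softmax(scores)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical completions for the prompt "The recommended dose is ..."
CANDIDATES = ["200 mg", "400 mg", "2000 mg"]
SCORES = [2.0, 1.5, 0.2]  # made-up scores, not real model output

if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs match
    draws = [sample_next_word(CANDIDATES, SCORES, rng) for _ in range(1000)]
    for word in CANDIDATES:
        print(word, draws.count(word))
```

In this toy setup the least likely completion carries a probability of roughly 9%, so it still appears regularly across many draws. Nothing in the sampling step distinguishes a correct completion from an incorrect one, which is why reviewing outputs matters.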

2. Regulatory and Ethical Blind Spots

The most alarming implication involves the potential for misuse. Without monitoring, how will Meta enforce its own "Safety Guidelines"? If a user turns to Incognito mode to generate instructions for dangerous activities, such as the production of illicit substances, the creation of weaponry, or methods for financial fraud, Meta may have no way to review the exchange afterward or to intervene.

Ethicists are already sounding the alarm. If an AI provides dangerous medical advice that leads to physical harm, and there is no record of the conversation, establishing accountability becomes extremely difficult, both legally and technically. This "privacy-by-default" approach effectively creates an unmonitored channel that, in the wrong hands, could be used to bypass the safety guardrails that Meta has spent millions of dollars implementing.

3. The "Side Chat" Utility

In addition to Incognito mode, Meta is introducing a "Side Chat" feature, which allows the AI to function as an assistant within existing conversations. This feature is designed to reduce the friction of toggling between a chat with a friend and a chat with an AI. While this may increase productivity, it also blurs the line between human communication and machine-generated content, potentially leading to subtler forms of social manipulation or to reliance on AI to mediate human relationships.

The Road Ahead: Privacy vs. Accountability

The tension between privacy and safety is the defining challenge of the current AI era. Meta’s decision to prioritize absolute privacy in its Incognito mode is a strategic attempt to win user trust, but it may come at the expense of necessary safety protocols.

If Meta is to succeed, it must find a way to verify the accuracy and safety of its AI responses without compromising the privacy of its users. This might involve moving toward "on-device" processing, where the AI runs locally on the user’s hardware, or developing more robust, non-intrusive safety filters that operate in real time without storing user data.
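One way to picture such a non-intrusive filter is the minimal Python sketch below. It is an illustration under stated assumptions, not Meta’s design. The `EphemeralSafetyFilter` class, the `RULES` table, and the keyword patterns are all hypothetical, and a production system would likely rely on trained classifiers rather than regular expressions. The idea shown is that each prompt is checked in memory and only an aggregate count per category is kept, never the prompt text.

```python
import re
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Hypothetical rule table: category name -> pattern. Placeholder patterns only.
RULES = {
    "weapons": re.compile(r"\bhow to (build|make) a bomb\b", re.IGNORECASE),
    "fraud": re.compile(r"\b(forge|fake) an? invoice\b", re.IGNORECASE),
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    category: Optional[str]  # matched category, or None when allowed


class EphemeralSafetyFilter:
    """Checks prompts in memory and keeps only per-category counts."""

    def __init__(self):
        # Aggregate statistics only; no prompt text is ever stored here.
        self.blocked_counts = Counter()

    def check(self, prompt: str) -> Decision:
        for category, pattern in RULES.items():
            if pattern.search(prompt):
                self.blocked_counts[category] += 1
                return Decision(allowed=False, category=category)
        return Decision(allowed=True, category=None)


if __name__ == "__main__":
    safety = EphemeralSafetyFilter()
    print(safety.check("How to build a bomb at home"))
    print(safety.check("Summarize this contract for me"))
    print(dict(safety.blocked_counts))
```

The per-category counts would give developers a coarse signal about how often the filter fires without any record of what a particular user typed. Whether that signal is enough to catch systematic model errors is an open question.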

As it stands, the rollout of Incognito Chat represents a high-stakes gamble. By giving users a "space to think and explore ideas without anyone watching," Meta is opening a digital frontier that is as potentially transformative as it is inherently risky. For the average user, the convenience will be the primary draw; for regulators and safety advocates, the absence of oversight will remain a significant cause for concern.

Whether this feature sets a new standard for private AI or serves as a cautionary tale remains to be seen. What is certain is that the era of "private AI" has arrived, and it brings with it a host of ethical questions that the tech industry is only beginning to address.