In a strategic pivot that signals the maturation of its wearable hardware ecosystem, Meta has officially opened its "Display" smart glasses platform to third-party developers. By inviting external creators to build mobile and web-based applications for the device, the tech giant is moving beyond the initial "proof-of-concept" phase of its AI-powered eyewear and into a period of aggressive utility expansion. This milestone, announced via the Meta developer blog, marks a significant shift in how users will eventually interact with digital information in real-world environments.

Released in September, Meta’s Display glasses represent the company’s most sophisticated smart glasses to date. Unlike earlier models, which focused primarily on photo capture and simple voice-activated assistance, the Display glasses feature an integrated heads-up display (HUD) and are paired with a proprietary wrist-worn Neural Band. By granting developers access to these tools, Meta is attempting to lay the groundwork for an era in which the smartphone is no longer the primary gateway to the digital world.

The Core Facts: A New Frontier for Application Development

The developer preview program allows creators to build experiences that leverage the unique spatial and sensory capabilities of the Display glasses. Meta is enabling developers to utilize familiar programming environments, allowing them to either extend existing iOS and Android mobile applications or architect entirely new, glasses-first experiences.

Central to this new developer toolkit is the integration of the Neural Band. This wrist-worn peripheral detects the subtle electrical signals produced by muscle activity in the wrist, allowing users to control the device through nearly imperceptible gestures. This removes the need for voice commands, which can be socially awkward or ineffective in loud environments, and for physical touchscreens, which are inherently limited by their 2D nature.

For developers, this means designing interfaces that exist in a "spatial" context. Instead of looking down at a screen, a user might see information overlaid on their physical environment. Whether it is a professional technician needing a wiring diagram while keeping their hands free, or a hobbyist receiving real-time guidance on a craft, the applications are restricted only by the ingenuity of the development community.

Chronology: From Concept to Open Ecosystem

The journey to this moment has been characterized by iterative releases and strategic hardware refinement.

- Pre-2024: Meta’s early smart glasses, built in collaboration with Ray-Ban, focused on social sharing and basic AI audio assistance.

- September 2025: The official launch of the Meta Display glasses. The device debuted with a focus on its heads-up display capabilities and the introduction of the Neural Band, positioning it as a direct competitor to future augmented reality (AR) hardware.

- Late 2025 (The Current Phase): Meta begins the gradual rollout of its developer preview, inviting a select cohort of third-party partners to experiment with the SDK (Software Development Kit).

- 2026 and Beyond: Meta has hinted at a more robust launch of full-scale AR glasses. The current Display developer push acts as a foundational training ground for the developers who will populate that future ecosystem.

Supporting Data: Why Gesture Control Changes Everything

The shift away from traditional input methods is not merely an aesthetic choice; it is a functional necessity. Human-computer interaction (HCI) research frequently points to "friction," the effort required to begin and complete an interaction, as a major deterrent to long-term wearable adoption.

If a user must reach into their pocket for a phone, unlock it, open an app, and then search for information, the latency of that interaction breaks the user’s flow. Meta’s approach, specifically the Neural Band, seeks to reduce this latency to near-zero.

According to Meta’s technical documentation, the Neural Band uses electromyography (EMG) sensors. By translating the electrical signals in the user’s wrist into digital inputs, the glasses can interpret a flick of a finger or a subtle squeeze as a click, scroll, or selection. This "discreet interaction" model is essential for the glasses to become a socially acceptable, all-day wearable. If a user does not need to talk to their glasses or wave their arms in public, the barrier to adoption drops significantly.
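To make the gesture-to-input idea concrete, the following is a minimal TypeScript sketch of how an app might map recognized gestures to interface actions. It is not Meta’s SDK: the gesture names, the `GestureEvent` shape, the confidence threshold, and the `ListView` class are all hypothetical, chosen only to illustrate the click, scroll, and selection mapping described above.

```typescript
// Hypothetical gesture and action names; none of these come from Meta's SDK.
type Gesture = "pinch" | "double_pinch" | "swipe_left" | "swipe_right";
type Action = "select" | "back" | "scroll_prev" | "scroll_next";

// Assumed event shape: a recognized gesture plus a 0..1 confidence score.
interface GestureEvent {
  gesture: Gesture;
  confidence: number;
}

// One fixed action per gesture keeps the interaction model easy to learn.
const GESTURE_MAP: Record<Gesture, Action> = {
  pinch: "select",
  double_pinch: "back",
  swipe_left: "scroll_prev",
  swipe_right: "scroll_next",
};

// Drop low-confidence detections so incidental movement triggers nothing.
function resolveAction(event: GestureEvent, minConfidence = 0.8): Action | null {
  if (event.confidence < minConfidence) return null;
  return GESTURE_MAP[event.gesture];
}

// A tiny list UI that is driven only by resolved actions.
class ListView {
  index = 0;
  selected: string | null = null;
  private items: string[];

  constructor(items: string[]) {
    this.items = items;
  }

  apply(action: Action): void {
    switch (action) {
      case "scroll_next":
        // Clamp to the last item (or 0 when the list is empty).
        this.index = Math.max(0, Math.min(this.index + 1, this.items.length - 1));
        break;
      case "scroll_prev":
        this.index = Math.max(this.index - 1, 0);
        break;
      case "select":
        this.selected = this.items[this.index] ?? null;
        break;
      case "back":
        this.selected = null;
        break;
    }
  }
}

// Feed a stream of gesture events into the view, skipping rejected ones.
function run(view: ListView, events: GestureEvent[]): void {
  for (const event of events) {
    const action = resolveAction(event);
    if (action !== null) view.apply(action);
  }
}
```

The design choice worth noting is the confidence gate: because the input is inferred from muscle signals rather than a physical button press, an app would plausibly want to ignore uncertain detections instead of acting on them.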

Official Responses and Visionary Applications

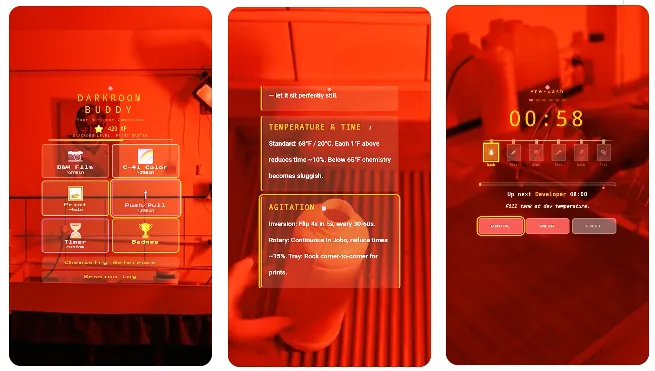

Meta CTO Andrew Bosworth has been the primary evangelist for this new interface model. By sharing glimpses of projects like "Darkroom Buddy," Bosworth is attempting to illustrate the "in-situ" value proposition of the hardware.

"Darkroom Buddy" is a prime example of the utility Meta envisions: an application that detects the user’s environment and provides real-time, context-aware information. In the case of a darkroom, the glasses might overlay exposure times, chemical temperatures, or step-by-step instructions directly over the physical trays and equipment.

In an official statement, Meta noted: "For developers, this opens up a new unique interaction model that doesn’t rely on touchscreens, voice, or capacitive touch. You can design experiences that respond to simple gestures, enabling more discrete, immediate control in real-world contexts without speaking or reaching for the glasses."

This vision is shared by a growing team of researchers at Meta’s Reality Labs, who argue that the future of computing lies not on a screen but in an ambient digital layer over the physical world.

Implications: The Road to True AR

The decision to open the platform is a clear signal that Meta is preparing for its next major hardware jump: dedicated AR glasses. Industry analysts have suggested that the "Display" model is a bridge device, a way to get the public comfortable with wearing computer-integrated eyewear before full AR glasses arrive, which Meta has hinted could happen in 2026 or later.

The Shift in App Economics

The opening of this platform will inevitably force a rethink of app economics. Current mobile app stores are dominated by scrolling feeds and swipe-based interactions. The "Display" ecosystem will require an entirely new UX/UI language. Developers who master "gesture-first" and "context-aware" design today will likely hold a competitive advantage when the AR hardware market truly explodes in the coming years.

The Privacy and Social Challenges

While the potential for productivity is immense, the widespread use of smart glasses carries significant social and privacy implications. Meta is aware of these hurdles, as evidenced by the emphasis on "discrete" and "socially-friendly" controls. However, the introduction of third-party apps also opens the door to third-party data collection. Ensuring that developers adhere to strict privacy standards will be a primary focus for Meta’s compliance teams as the program expands.

The Competitive Landscape

Meta is not alone in this race. With rumors of Apple’s continued investment in spatial computing and various startups pushing AR headsets, the competition to define the "operating system of the face" is heating up. By inviting developers now, Meta is attempting to build a "moat" around its ecosystem: the more developers who learn to build for the Meta glasses today, the less likely they are to switch to a competitor’s platform tomorrow.

Conclusion: The Future of Ambient Computing

The launch of the Meta Display developer preview is more than just a software update; it is the official start of the "wearable computing" era. By moving from a closed, first-party product to an open, developer-supported ecosystem, Meta is betting that the most useful applications for smart glasses haven’t been imagined yet.

As the company gradually expands access to its development program over the coming weeks, the tech industry will be watching closely. If Meta succeeds in creating a robust, gesture-driven app library, the "Display" glasses may well be remembered as the device that finally moved computing off the screen and into the world around us.

Whether this leads to a utopian vision of augmented efficiency or a new set of challenges regarding privacy and digital distraction remains to be seen. However, one thing is certain: the era of the smartphone as our sole digital companion is nearing its sunset, and the era of the wearable assistant has officially begun.