In the high-stakes theater of modern cybersecurity, data is the ultimate currency. For threat intelligence firms, AI researchers, and antivirus developers, the ability to study, categorize, and preempt malicious code is the primary line of defense against global cybercrime. Recently, a public discourse sparked by a simple comparison of repository sizes offered a rare, tangible glimpse into the sheer scale of the world’s malware problem.

The conversation began on X (formerly Twitter) when the research collective vx-underground—widely recognized for maintaining one of the internet’s most comprehensive archives of malware source code—disclosed that their collection had reached a staggering 30 terabytes. The post drew an immediate response from Bernardo Quintero, founder of VirusTotal, the industry-standard service that aggregates malware scans from dozens of antivirus engines. Quintero revealed that VirusTotal’s repository, fueled by years of global user contributions, stands at approximately 31 petabytes.

To the layperson, these numbers are abstract, lost in the prefix-heavy jargon of modern computing. However, when translated into physical space, the contrast is startling: 31 petabytes is roughly 1,000 times larger than 30 terabytes. To understand what this means for the future of digital defense, we must look past the binary and visualize the physical footprint of these data giants.

A Chronology of Accumulation: From Viruses to Petabytes

The history of malware collection is a history of the internet itself. In the early 1990s, malware was a boutique concern—a hobbyist’s curiosity that could fit on a handful of floppy disks. As the internet matured, so did the sophistication and frequency of attacks.

The evolution of these repositories reflects this trajectory:

- The Early Era: Malware samples were collected by small groups of enthusiasts on bulletin board systems (BBS). Data was measured in kilobytes, and researchers could track almost every known variant personally.

- The Proliferation Era (2000s): With the rise of high-speed internet and the commercialization of cybercrime, the number of variants exploded. This necessitated the birth of automated scanning services like VirusTotal, which was founded in 2004 to provide a centralized clearinghouse for file analysis.

- The AI and Big Data Era (Present): Today, we are in an age where malware is generated at machine speed. Artificial intelligence is used both to craft polymorphic code that evades detection and to train the defensive models that catch them. The massive datasets held by entities like VirusTotal are no longer just archives; they are the "fuel" for the next generation of predictive security models.

The Physicality of Code: A Thought Experiment

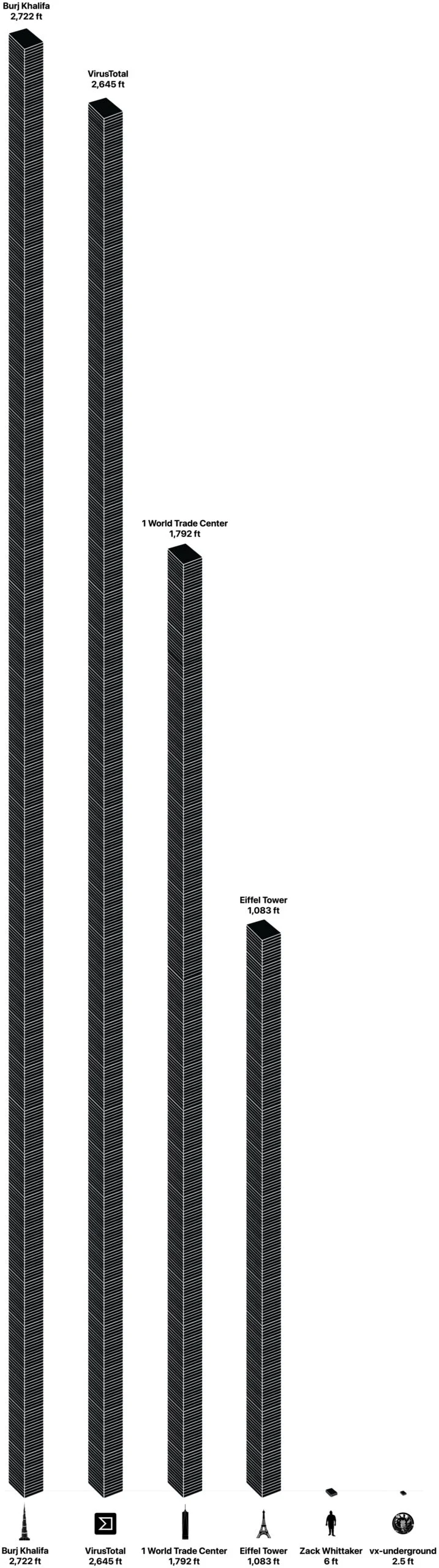

To grasp the scale of 31 petabytes, we must depart from the abstract. If we were to store this data on industry-standard 3.5-inch internal hard drives—the kind found in most enterprise server racks—what would the "tower" of malware actually look like?

For this calculation, we assume a standard 1-terabyte (TB) capacity drive, which has a physical height of exactly one inch.

The vx-underground Archive

With 30 terabytes of data, the vx-underground repository would require 30 hard drives. Stacked vertically, this collection reaches a height of 30 inches, or 2.5 feet. It is a modest, manageable stack—something one might keep on a desk or a small rack in a laboratory. It represents a curated, high-value collection of source code, often used for deep-dive forensics and academic study.

The VirusTotal Repository

The scale shifts dramatically when we examine VirusTotal’s 31 petabytes. Using the same 1-terabyte-per-drive metric, we are looking at 31,744 individual hard drives.

If these drives were stacked one on top of the other, the resulting tower would stand approximately 2,645 feet tall. To put this into perspective, the Burj Khalifa in Dubai—the tallest building in the world—measures 2,722 feet. The VirusTotal archive, therefore, is nearly the height of the tallest human-made structure on Earth. To use another famous benchmark, this data stack would be roughly two-and-a-half times the height of the Eiffel Tower (1,083 feet).

Supporting Data and Technical Context

It is important to note the technical nuance in these figures. "About" is a generous term in the data storage world. In our calculation, we assumed a 1-to-1 ratio between raw data and disk capacity. In a real-world enterprise environment, storage is rarely that clean. Hard drives contain overhead, filesystem formatting, and parity data. Furthermore, deduplication—a process where redundant files are removed—is essential for large repositories.

If VirusTotal were to store its data without the sophisticated compression and deduplication techniques they employ, the "tower" would likely be even taller. Conversely, if we used high-density enterprise drives (e.g., 20TB drives), the stack would be significantly shorter, though the sheer volume of "malicious intent" represented remains constant.

The divergence between the 30 terabytes held by vx-underground and the 31 petabytes held by VirusTotal highlights a fundamental difference in mission. vx-underground functions as a specialized library for researchers, focusing on the quality and accessibility of source code. VirusTotal acts as a global ingestion engine, capturing the sheer volume of "in-the-wild" samples submitted by security tools, enterprises, and individuals across the globe. Both are vital, but they operate at different orders of magnitude.

Official Responses and Industry Implications

The exchange between the two parties underscored the cooperative, albeit competitive, nature of the cybersecurity ecosystem. When asked about the sheer scale of the data, industry experts point toward the necessity of "training data" as the primary driver for such massive repositories.

Cybersecurity firms are currently locked in an arms race. As attackers integrate AI into their toolkits, defenders must have access to massive, diverse, and well-labeled datasets to ensure their detection algorithms are not caught off guard by novel strains of ransomware or spyware.

"The volume of data isn’t just for vanity," notes one security analyst. "It is the prerequisite for relevance. If you aren’t training your models on the latest variants—and the millions of variants that preceded them—you are essentially fighting a war with an outdated map."

However, this reliance on massive data repositories also introduces operational security (opsec) challenges. The very act of aggregating 31 petabytes of malicious code creates a high-value target. If a threat actor were to gain unauthorized access to such a repository, the potential for "re-weaponization"—taking old, known-vulnerable code and repackaging it for new attacks—is a persistent nightmare for security architects.

The Future: Beyond the Tower

The "tower of malware" is a powerful metaphor for the unsustainable growth of digital threats. As we look toward the future, the industry is grappling with a core question: Is there a limit to how much data we need?

The move toward "edge" detection—where AI models are deployed on the endpoints themselves—suggests that while massive centralized repositories like VirusTotal will always be necessary for deep intelligence, the future of defense will be increasingly decentralized. We are moving toward a world where the "tower" of data is replaced by a vast, distributed network of intelligent sensors that understand the intent of code rather than just its signature.

Until that day, however, we remain in an era of accumulation. The fact that a single, crowdsourced malware repository can rival the height of the world’s tallest skyscrapers is a testament to the scale of the digital conflict we are all currently living through. It is a sobering reminder that for every piece of code we write to build the future, there is an entire skyscraper of code being written to tear it down.

Zack Whittaker is the security editor at TechCrunch and author of the weekly newsletter "This Week in Security." For more insights into the evolving landscape of digital threats, follow his ongoing coverage of the intersection between data, policy, and global cybersecurity.