For millions of individuals worldwide who are deaf or hard of hearing, sign language is not merely a supplemental communication tool. It is a primary language, a cultural cornerstone, and a vital means of self-expression. Yet a persistent, systemic barrier remains: the vast majority of the hearing population is not fluent in American Sign Language (ASL) or International Sign Language (ISL). This disconnect has historically pushed the deaf community to the periphery of mainstream communication, leaving it dependent on interpreters or on slow, inefficient text-based exchanges.

However, a development from a research team in South Korea may help close that gap. A new study published in Science Advances introduces the WRSLT (Wirelessly connected, Ring-type Sign Language Translator), a non-intrusive, wearable system that translates sign language into text in real time with roughly 88% accuracy.

The Core Innovation: Breaking Free from the "Glove" Paradigm

Historically, attempts to bridge the communication gap between signers and non-signers have been hampered by hardware limitations. Earlier sign-language-to-text systems relied heavily on “data gloves”: bulky, wired peripherals laden with external sensors that often restricted the natural fluidity of hand movements. These devices were not only physically cumbersome but also prone to calibration problems, often requiring a separate setup for each user.

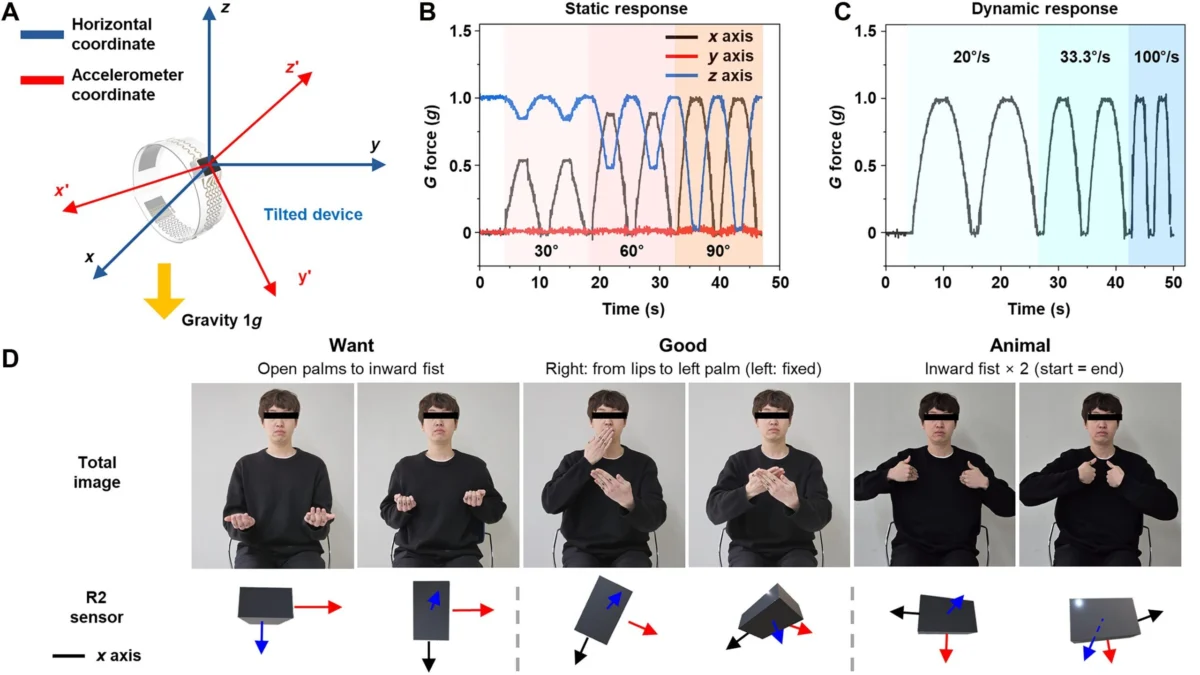

The WRSLT system departs from this model entirely. Instead of a single restrictive garment, the system utilizes a set of compact, wearable smart rings. Each ring is equipped with a high-precision three-axis accelerometer designed to capture the nuance of finger orientation and hand trajectories. By distributing the sensors across multiple digits, the system creates a high-fidelity map of the user’s signing motion, effectively capturing the “syntax” of the hands without requiring the wearer to sacrifice comfort or speed.
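Because an accelerometer that is held still measures mostly gravity, even a single ring's reading says something about how its finger is tilted. The minimal Python sketch below illustrates that general principle with the standard pitch-and-roll-from-gravity formulas. It is an assumption for illustration, not a description of the WRSLT's own processing; the function `tilt_from_gravity`, the axis convention, and the sample readings are all hypothetical.

```python
import math


def tilt_from_gravity(ax: float, ay: float, az: float) -> tuple[float, float]:
    """Estimate (pitch, roll) in degrees from a static accelerometer reading in g.

    At rest the sensor measures only gravity, so the direction of (ax, ay, az)
    shows how the ring, and the finger wearing it, is tilted. The axis and sign
    convention used here is an assumption; real sensors are mounted differently.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


# Hypothetical readings (in g) from three rings while the hand is held still.
readings = {
    "index, flat": (0.0, 0.0, 1.0),
    "middle, rolled sideways": (0.0, 1.0, 0.0),
    "ring, tipped forward": (0.707, 0.0, 0.707),
}
for finger, (ax, ay, az) in readings.items():
    pitch, roll = tilt_from_gravity(ax, ay, az)
    # round() returns an int, which also avoids printing "-0" for a negative zero.
    print(f"{finger}: pitch={round(pitch)} deg, roll={round(roll)} deg")
```

Once the hand moves, the accelerometer also picks up the acceleration of the motion itself, so static angles alone cannot describe a sign; that is where the trajectory data and the machine-learning step come in.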

How the Technology Works

The mechanics of the WRSLT are deceptively simple:

- Motion Capture: As the user signs, the rings detect subtle changes in finger posture and spatial movement.

- Wireless Transmission: This motion data is relayed in real time over a low-latency Bluetooth connection to a host device, such as a smartphone or a laptop.

- AI Interpretation: A sophisticated machine-learning algorithm, trained on thousands of hours of sign language patterns, processes the incoming data, translating the complex gestures into coherent, readable text.
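The three steps above can be sketched in a few dozen lines of Python. This is an illustrative stand-in, not the WRSLT team's model: it swaps in a simple nearest-centroid classifier over per-window mean and standard-deviation features. The data layout (`Sample`), the two-ring setup, the helper `synthetic_window`, and the words `hello` and `thanks` are all hypothetical, and the readings are generated synthetically rather than received over Bluetooth.

```python
import math
from statistics import mean, pstdev

# Hypothetical layout: one sample holds a reading per ring, and each reading
# is an (x, y, z) acceleration tuple from that ring's three-axis accelerometer.
Sample = list[tuple[float, float, float]]


def extract_features(window: list[Sample]) -> list[float]:
    """Summarize a window as the mean and spread of every axis on every ring."""
    features = []
    for ring in range(len(window[0])):
        for axis in range(3):
            values = [sample[ring][axis] for sample in window]
            features.append(mean(values))
            features.append(pstdev(values))
    return features


def train_centroids(examples: dict[str, list[list[Sample]]]) -> dict[str, list[float]]:
    """Average the feature vectors of all labelled windows for each word."""
    centroids = {}
    for word, windows in examples.items():
        vectors = [extract_features(w) for w in windows]
        centroids[word] = [mean(column) for column in zip(*vectors)]
    return centroids


def classify(window: list[Sample], centroids: dict[str, list[float]]) -> str:
    """Return the word whose centroid is nearest (Euclidean distance) to the window."""
    features = extract_features(window)
    return min(centroids, key=lambda word: math.dist(features, centroids[word]))


def synthetic_window(posture, n_samples=10):
    """Hypothetical two-ring signal: a small oscillation around a fixed posture."""
    x, y, z = posture
    return [
        [(x + 0.1 * math.sin(t), y, z), (y, x + 0.1 * math.cos(t), z)]
        for t in range(n_samples)
    ]


# "Training" on one synthetic window per word, then translating a new window.
centroids = train_centroids({
    "hello": [synthetic_window((0.0, 0.0, 1.0))],
    "thanks": [synthetic_window((1.0, 0.0, 0.0))],
})
print(classify(synthetic_window((0.05, 0.0, 0.95)), centroids))  # hello
```

A nearest-centroid model is chosen here only because it fits in a few lines; a production translator would use a far richer model over the full motion trace.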

A Chronology of Development: From Concept to Breakthrough

The journey to the WRSLT began with a fundamental question: How can we make sign language technology as unobtrusive as a piece of jewelry?

- Phase I: Data Collection and Mapping: Researchers spent years cataloging the variations in sign language execution. Recognizing that human communication is inherently messy—varying in speed, hand size, and stylistic flair—the team focused on creating an algorithm that prioritized the intent of the sign rather than a rigid, mathematical recreation of the movement.

- Phase II: Hardware Miniaturization: The transition from bulky glove-based arrays to miniaturized ring sensors was the most significant hurdle. The team had to pack significant processing power and long-lasting battery life into a form factor small enough to be worn comfortably on multiple fingers.

- Phase III: The "Generalization" Milestone: A major breakthrough came when the researchers trained the system on one group of signers and then tested it on an entirely different group. The system’s ability to maintain high accuracy on users it had never seen suggested that the AI had learned the signs themselves, rather than the habits of specific individuals.
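The evaluation described in Phase III is commonly called a subject-independent (or leave-signers-out) split: every recording from a given signer goes into either the training set or the test set, never both. A minimal Python sketch, assuming toy two-number feature vectors, hypothetical signer IDs, and a simple nearest-centroid classifier rather than anything from the study, looks like this:

```python
import math
from collections import defaultdict
from statistics import mean

# Toy records: (signer_id, feature_vector, word). All values are hypothetical.
records = [
    ("signer_a", [0.0, 1.0], "hello"),
    ("signer_a", [1.0, 0.0], "thanks"),
    ("signer_b", [0.1, 0.9], "hello"),
    ("signer_b", [0.9, 0.1], "thanks"),
    ("signer_c", [0.2, 0.8], "hello"),
    ("signer_c", [0.6, 0.5], "thanks"),
]


def split_by_signer(records, test_signers):
    """Send every recording from a test signer to the test set, so no signer is in both."""
    train = [r for r in records if r[0] not in test_signers]
    test = [r for r in records if r[0] in test_signers]
    return train, test


def fit_centroids(train):
    """Average the training feature vectors for each word (a simple stand-in classifier)."""
    grouped = defaultdict(list)
    for _, features, word in train:
        grouped[word].append(features)
    return {word: [mean(col) for col in zip(*vecs)] for word, vecs in grouped.items()}


def accuracy(centroids, test):
    """Fraction of test recordings whose nearest centroid matches the true word."""
    correct = 0
    for _, features, word in test:
        predicted = min(centroids, key=lambda w: math.dist(features, centroids[w]))
        if predicted == word:
            correct += 1
    return correct / len(test)


train, test = split_by_signer(records, test_signers={"signer_c"})
score = accuracy(fit_centroids(train), test)
print(f"Accuracy on held-out signer: {score:.0%}")
```

Splitting by recording instead of by signer would let the model see each test signer's habits during training, which tends to inflate accuracy; keeping the signers disjoint is what makes a cross-user result meaningful.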

Supporting Data: By the Numbers

The efficacy of the WRSLT is backed by rigorous empirical testing. According to the study, the system achieved an accuracy rate of approximately 88% in real-time translation—a metric that is highly competitive in the field of assistive technology.

| Language | Accuracy Rate |
|---|---|
| International Sign Language (ISL) | 88.5% |
| American Sign Language (ASL) | 88.3% |

These figures represent a significant step forward, particularly given the natural variation in how different individuals sign. Unlike previous systems that required a "training period" for every new user, the WRSLT generalized well to signers it had not been trained on, suggesting that it could work "plug-and-play" for a wide range of users. Currently, however, the vocabulary is limited to 100 core words per language; the researchers are already working on expanding this dictionary to include complex phrases and contextual colloquialisms.

The Human Element: Implications for Global Accessibility

The implications of this technology extend far beyond the convenience of text conversion. By providing a reliable, portable, and discreet translator, the WRSLT has the potential to democratize access to public services, educational environments, and social interactions for the deaf and hard-of-hearing community.

Reducing Social Friction

In professional settings—such as hospitals, legal offices, or classrooms—the reliance on human interpreters, while necessary, can sometimes introduce a delay or a sense of "third-party" intrusion into private matters. While the WRSLT does not replace the nuance and cultural depth of a human interpreter, it serves as a critical bridge for day-to-day interactions, empowering deaf individuals to interact with the world on their own terms.

The Future of Wearables

The success of the WRSLT also signals a shift in the broader wearables market. As AI integration becomes more sophisticated, we are moving away from screens and toward "ambient computing." The idea that a simple accessory—a ring—can act as an interface for complex, life-altering translation suggests that future assistive technology will be increasingly invisible and integrated into our daily attire.

Official Responses and Peer Perspectives

While the scientific community has praised the study, experts in assistive technology urge a measured approach. Dr. Elena Vance, a lead researcher in human-computer interaction (HCI), notes: "The WRSLT is a triumph of engineering. However, the true test will be moving from a controlled lab environment to the chaotic reality of everyday life. Sign language is not just about the hands; it involves facial expressions, body posture, and context. The next iteration must incorporate these elements to truly capture the soul of the language."

The research team in South Korea has acknowledged these limitations, noting that they are currently working on a "version 2.0" that will feature even more miniaturized sensors and potentially incorporate vision-based AI to capture facial cues, further closing the gap between machine translation and human communication.

Looking Ahead: The Path to Market

While the WRSLT is still in its research and development phase, the buzz surrounding the technology suggests that commercialization may be on the horizon. The goal is to make the system affordable and accessible. If the researchers can successfully scale the production of these rings, it could mark the beginning of a new era for accessibility technology.

The researchers emphasize that they are not just building a product; they are building a bridge. "Language is the foundation of community," the team noted in the study’s conclusion. "If we can use technology to make that foundation accessible to everyone, regardless of their hearing status, we have not just built a better gadget—we have built a more inclusive world."

As we look toward the future, the WRSLT stands as a beacon of what is possible when cutting-edge AI and empathetic design collide. For now, it remains a promising prototype aimed at an age-old problem, but as the vocabulary expands and the hardware shrinks, it is poised to become an essential tool in the global movement toward universal accessibility.

Related Developments in the 2026 Tech Landscape

The WRSLT breakthrough is part of a broader wave of innovation now sweeping the tech sector. As this year’s major industry showcases have shown, companies are pivoting toward "invisible AI."

- The Android Show 2026: Google’s recent pre-I/O event highlighted a massive push toward deep AI integration, with the company showcasing a new category of "Googlebook" laptops and a revamped Android Auto experience designed to make technology feel more like an extension of the user.

- The Magic Pointer: Similarly, Google’s introduction of the "Magic Pointer"—which uses AI to allow cursors to understand and interact with the content they are pointing at—mirrors the logic of the WRSLT. Both technologies seek to reduce the "steps" between human intent and digital execution.

- Gemini Intelligence: With the rollout of Gemini across Android devices—including cars, glasses, and wearables—the infrastructure to support complex, real-time translations like the WRSLT is becoming more robust and ubiquitous.

In an age where artificial intelligence is often criticized for creating distance between people, it is refreshing to see technologies that aim to do the exact opposite: to bridge the silence, facilitate understanding, and ensure that the most important human tool—communication—remains accessible to all.