As Meta finds itself embroiled in a high-stakes legal confrontation with the state of New Mexico, the tech giant is launching a new wave of safety measures aimed at its youngest users. The company characterizes the move as a proactive step toward digital safety. It arrives at a critical juncture, as Meta's continued presence in New Mexico hangs in the balance before regulators and the courts.

The New Safety Offensive: A Shift in Strategy

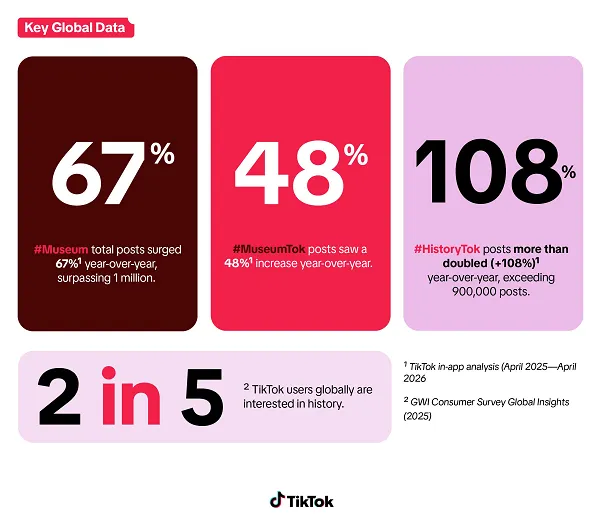

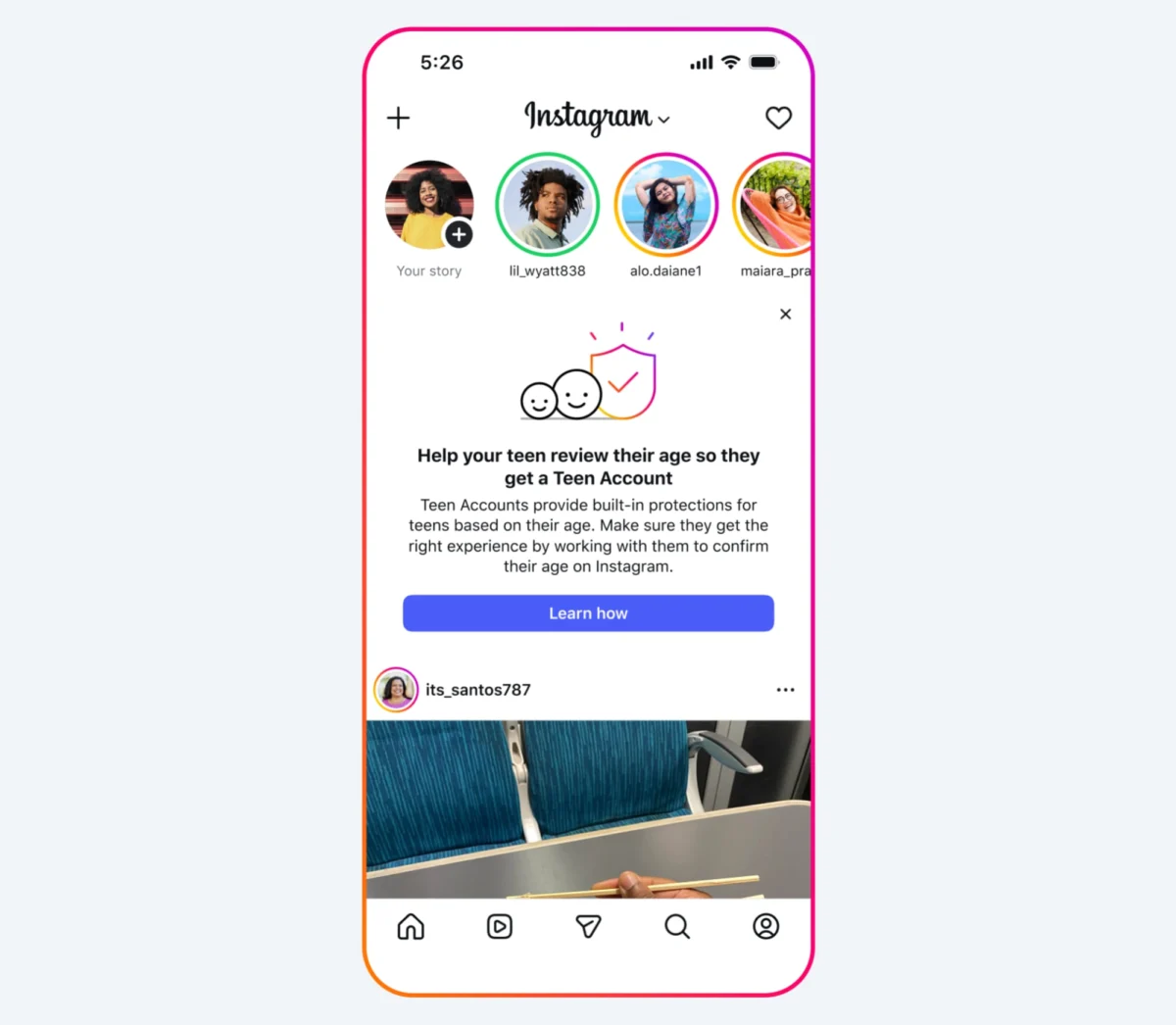

In a recent blog post, Meta announced a multi-pronged approach to bolstering age-related protections on Facebook and Instagram. The core of this initiative involves a broad notification campaign targeting parents across the United States. Unlike previous iterations of parent-focused tools, which were largely confined to those using formal supervision features, this new notification is being pushed to any user Meta identifies as a parent.

These users will receive guidance on how to monitor and confirm their children's ages on the platform. The notifications include direct links to expert-developed resources, such as those from Dr. Lockhart, which emphasize the psychological and safety benefits of children providing their accurate age. By encouraging parents to "truth-check" their children's accounts, Meta hopes to close the gap between its users' actual ages and the ages recorded in its systems.

Meta is also aggressively expanding its AI-driven age-detection technology beyond the U.S. This system, which began deployment in April 2025, uses machine learning to identify users who may have lied about being adults. When the AI flags a discrepancy, it automatically reassigns the user to a "Teen Account," a restricted tier with more stringent privacy and safety guardrails. Meta confirmed that the technology is now rolling out across all 27 European Union member states and Brazil, and that it is being activated for Facebook users in the U.S. for the first time.

Chronology of a Regulatory Conflict

To understand the urgency of Meta’s latest announcements, one must look at the escalating tensions in the New Mexico courtroom.

- April 2025: Meta officially launches its AI-driven age-identification project, intending to curb the prevalence of underage users masquerading as adults.

- Late 2025/Early 2026: Independent experts publish a scathing report analyzing Meta's "Teen Account" infrastructure. The researchers conclude that the safety features are largely cosmetic, documenting multiple instances where the promised guardrails failed to prevent predatory contact from strangers.

- March 2026: Meta suffers a landmark legal defeat as a jury finds the company liable for misleading consumers about the safety of its platforms. The jury concludes that Meta willfully endangered children, leading to an initial court-ordered penalty of $375 million.

- April 30, 2026: In a dramatic escalation, Meta files court documents threatening to shut down its services, including Facebook, Instagram, and WhatsApp, in the state of New Mexico if the court imposes the proposed "onerous" injunctive relief.

- May 2026: The second phase of the New Mexico trial continues, with the state's Attorney General, Raul Torrez, pushing for $3.75 billion in additional damages and a total overhaul of Meta's youth-safety protocols.

The Data Divide: Promise vs. Performance

Meta’s reliance on AI for age detection is not without controversy. While the company touts its ability to analyze "contextual clues"—such as a user’s social circle, activity patterns, and profile metadata—the efficacy of these tools remains a point of contention.

The report published by independent researchers late last year remains the primary piece of evidence against the integrity of Meta’s Teen Accounts. The researchers found that despite the automated guardrails, the platform struggled to distinguish between legitimate social interaction and grooming behaviors. This has led critics to argue that Meta is focusing on "security theater"—implementing visible, parent-facing notifications—while failing to address the fundamental architecture that allows harmful interactions to occur.

Meta, for its part, maintains that it cannot solve the age-verification problem alone. In its recent communications, the company has called on lawmakers to intervene at the app-store level. Its argument is that companies like Apple and Google should verify age at the point of download and pass that information to developers as a standardized credential. By shifting the burden of verification to the operating system, Meta argues, the entire tech ecosystem would become safer.

Official Responses: A Clash of Perspectives

The divide between the company’s internal narrative and the perspective of New Mexico officials could not be starker.

Meta's legal counsel, Alex Parkinson, has been explicit in court: the demands New Mexico is placing on the company, which include strict age verification, a ban on all users under 13, and restrictions on end-to-end encryption for minors, are not merely difficult but "untenable." Parkinson argues that complying would require Meta to build a "walled garden" specifically for New Mexico, a technical and financial burden that would force the company to withdraw from the state entirely.

Attorney General Raul Torrez has dismissed these threats as "corporate bullying." In a recent statement, Torrez emphasized that the issue is not one of technological capability, but of corporate priority. "We know Meta has the ability to make these changes," Torrez said. "This is not about what they can do; it is about what they are willing to sacrifice in terms of advertising revenue to keep children safe."

The state’s position is that Meta’s business model is fundamentally at odds with the protection of minors. By seeking $3.75 billion in damages, the state is attempting to make it more expensive for Meta to ignore safety protocols than it is to implement them.

Implications for the Future of Social Media

The outcome of the New Mexico trial is widely expected to set a precedent for how social media companies are regulated in the United States. If the state succeeds in forcing Meta to implement aggressive age verification, other states are likely to follow suit with similar, if not more stringent, requirements.

The "Siloed Internet" Risk

Meta’s threat to withdraw from New Mexico raises the specter of a "balkanized" internet. If major platforms begin pulling out of jurisdictions that pass strict safety laws, the result could be a fragmented digital landscape where users in different regions have vastly different access to the global social web. While proponents of safety argue that this is a necessary cost to protect the next generation, critics warn that it could stifle connectivity and innovation.

The AI Dilemma

The trial also highlights the limitations of relying on artificial intelligence to enforce human-centric laws. As Meta continues to roll out AI-based age detection globally, the company is effectively beta-testing a system under the close scrutiny of judicial review. If the AI continues to misidentify users or fails to stop inappropriate contact, Meta's legal liabilities will only grow.

A Turning Point for Big Tech

Ultimately, the battle between New Mexico and Meta may mark the end of the "self-regulation" era for Big Tech. For years, companies like Meta operated under the assumption that they could manage user safety through internal policies and "best practices." The current legal environment suggests that those days are coming to a definitive end.

As the second phase of the trial continues, all eyes are on the judiciary’s next move. Whether the court forces Meta’s hand, leading to a massive overhaul of its global operations, or whether Meta succeeds in characterizing the state’s demands as "technologically infeasible," one thing is clear: the relationship between social media platforms and their youngest users is undergoing a forced, painful, and permanent transformation. The era of "move fast and break things" is being replaced by an era of "prove it and protect them," and the cost of this transition is measured in billions of dollars and the very future of digital access.